Introducing h-Logic

A New Way Forward via One Weird Trick

(This is my final philosophy article, the culmination of a decade of reading & research & discussion with all of you. Everything below draws upon that body of effort, so crucially impacted — whether inspired or corrected — by you bright folks I’ve come to call my friends over the years. I hope you find it worthy of something to “leave you with.”)

When you write “□φ” (“it is necessary that φ”) in modal logic, you’re expressing necessity, but which kind of necessity? Logical? Mathematical? Physical? Moral? The notation doesn’t say, so it’s up to context, convention, or footnotes to clarify.

Let’s look at some “musts”:

“2 + 2 must equal 4.”

“A ball thrown upward must come back down.”

“You must stop at red lights.”

“Bachelors must be unmarried.”

“She must truly love me.”

In each case the “must” is relative to some background norms, a set of expectation-like constraints they’re taking as fixed:

“2 + 2 must equal 4.” (… Per arithmetic axioms.)

“A ball thrown upward must come back down.” (… Per Earth’s gravity.)

“You must stop at red lights.” (… Per the demands of traffic laws.)

“Bachelors must be unmarried.” (… Per that definition of “bachelor.”)

“She must truly love me.” (… Per her behavior.)

But the bare “□” hides this dependency, even though that dependency is the very thing dictating what is required (or, with “◊,” what’s available).

When philosophers develop modal logics for different domains, they make different background assumptions. Epistemic logic assumes knowledge is introspective and factual, deontic logic frequently invokes obligations sans obligators, temporal logic assumes time is linear or branching, physical modality assumes deterministic or probabilistic laws, etc. Each domain gets its own specialized machinery, its own axioms, its own proof systems.

The result is a proliferation of special-case modal logics, each tailored to particular assumptions, making it hard to compare across domains or handle cases where multiple modal varieties interact. While some work has been done in multimodal expressions, the cross-incompatibility of different approaches makes this a challenge, and a lack of unity means it’s difficult for insights in one to lead to the next.

What if we figured out a way to unify semantics, syntax, and a broad interpretive standard that worked across modalities, and where those implicit stipulations are instead specified? What if there were cool payoffs in doing so?

Enter h-Logic...

… a modal framework that does just that.

Instead of writing “□φ” and hoping the context disambiguates, h-Logic forces relativization on the necessity (□) and possibility (◊) operators, just like we do in epistemic modality, but everywhere.

Like this:

□hφ

As in:

□Arithmetic(2 + 2 = 4)

□GeneralRelativity(Nothing travels faster than c)

□MyMorningEvents(I had coffee)

The subscript names a “provisum,” a labeled set of constraints. The name “h” stands for “hereby,” as in, “This provisum hereby takes these constraints as fixed,” or maybe “hypothetical,” as in, “On this hypothesis, these are the rules & facts.” These constraints can express past conditions (“Jane already left for the restaurant”), future conditions (“the coin will land heads”), present conditions (“I’m sitting in a chair”), counterfactual conditionals (“If I had left earlier, I would have made it on time”), demands (“You will leave your sister alone”), or whatever else is modellable in the language.

On its face this is just being a jerk about notation, but it spawns a different way of thinking about modality, solves for a number of irritating undernotation issues (the upside of being a jerk about notation), and has various other payoffs:

No pretenses of absolute modal facts.

We stipulate provisa to serve our contextual expressions; there’s no “absolutely correct” one unless we wish to privilege one so.

Modal varieties are unified (well, as much as we can get away with).

Mathematical necessity, physical law, moral obligation, epistemic data, and conceptual essence all use the same structure with no unique operators or specialized syntax, if we can help it.

Cross-provisum comparisons are expressible.

“This is necessary in Newtonian physics but not in relativity” becomes □NewtonianPhysicsφ ∧ ¬□GeneralRelativityφ. In standard modal logic you’d need a meta-language but in h-Logic it’s just another formula. You’ll see how nifty this gets a little later.

Hidden incongruences are visible.

My biggest nemesis of all, as you may already know. When people dispute whether something is “necessary” (or “possible”) they’re often using different provisa. Making this explicit can dissolve apparent contradictions and bring polysemy problems to the surface.

And more!

We’ll shortly see how mandatory subscripts lead to a distinctive modal logic framework, one that sidesteps traditional puzzles about possible worlds, handles counterfactuals through explicit provisum revision, makes conditional relevance visible through model structure, and unifies logical, mathematical, physical, normative, epistemic, and conceptual modality under one treatment.

Time to see what happens when we gotta tag our operators.

In This Article...

True philosophy nerds should glance at section 2’s tantalizing headers in order to aid their trudging survival through section 1. And this is because you cannot, must not, ought not, and shall not skip ahead per the bolded warning after this table of contents.

The Rundown

“Provisa” & “Constraints”

The Pitfalls We’re Dodging

The Formal System

Philosophical Payoffs

Cross-Provisum Comparisons

Scientific Theory Change

Epistemic Disagreement

Moral Disagreement & The Frege-Geach Problem

Counterfactuals & “Similarity”

Conditionals & “Relevance”

Free Will & Alternative Possibilities

Probability & Bertrand’s Paradox

Essence & Grounding

Persuasive Arguments as “Impelling” or “Inert”

Expressing Different Truth Theories

Conclusion

Inspirations

Invitations

In Summary

WARNING: This is meant to be read sequentially, with sections building on prior established tricks. This is not a quick read. It’s the product of years of stuff, so if you’re not in the mood to go deep, begone! Then come back later!

The Rundown

As mentioned in the intro, the fundamental move is pretty straightforward. Instead of writing:

□(2 + 2 = 4)

… and leaving implicit what kind of necessity this is, you write:

□Arithmetic(2 + 2 = 4)

The subscript (inspired by epistemic modality) names a provisum, our word for a labeled set of constraints by which the operator operates. The constraints are just formulas we stipulate.

E.g., the arithmetic provisum might contain:

Arithmetic = {

"0 is a number",

"For every number n, S(n) is a number",

"n + 0 = n",

"n + S(m) = S(n + m)",

{the other Peano axioms}

}

Given these constraints, certain things follow necessarily, like 2 + 2 = 4. Others don’t follow necessarily, like whether 64 is my favorite number (it is, though). And some formulas are ruled out per that provisum, like 2 + 2 = 5.

Q: How should we think of such constraints?

A: To get our intuition pumping, we can think of constraints like the provisum’s “expectations.” This is a bit of a language trick to unify interpretations across modalities and link to theories of truth that lean on the prospective (where the “expectance” is, “Start with a provisum, then test something per its restrictions”) as well as those that lean on the retrospective (where the expectance is, “What I have is, I assume, legit”). In our language habits we find a funny kind of variety whenever we’re pitting an operation against standards, e.g.,

Given the rules of Chess, you hereby…

Shall not (per what is predictively expected) move a pawn 3 spaces in a turn,

Ought not (owed to expectations) move a pawn 3 spaces in a turn,

and Cannot (potence/impotence as “not-ruled-out/ruled-out”; hereby, it is ruled out) move a pawn 3 spaces in a turn.

But also a bit of variety talking about present/past information, e.g.,

Given what I’m certain about, hereby…

X can’t be right… (X would violate what I expect is true)

… rather, it ought to be the case that Y (Y would accord to what I expect is true)

The “expectations” framing hogties these slippery language habits all in terms of articulable modal relata. And this in turn demystifies why we so often use “ought” language for non-behavioral expectations (“it oughtta rain soon”), “can” language for moral obligation/owing, etc.

For a programming metaphor, we’re hardcoding less, and making more of the logic data-driven, which gives us good reasons to use a weakly-typed architecture. Our loosey-goosey language gives us an opening to do so.

(Note: This all gives us license to smash “is” and “ought” together where they both express what follows from trusted or provisionally adopted expectations; the “supernormative ought” is just per the provisum you’re privileging, at which point Hume’s Guillotine rises between That One and the rest. You’ll see this “privileged provisum” pattern appear again and again as we explore more traditional philosophical issues.)

Q: So what is a provisum doing?

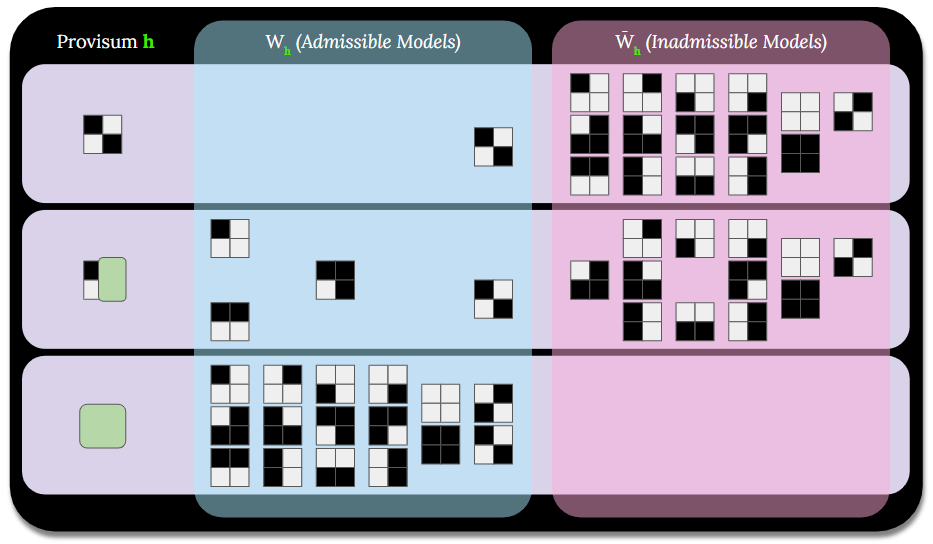

A: The provisum acts as a kind of “filter set,” winnowing candidate models down to the admissible models: the mathematical structures that satisfy all of the provisum’s expectations. That is why we are framing the provisum’s expectations as constraints:

□hφ means φ is true in every admissible model for provisum h.

◊hφ means φ is true in some admissible model for provisum h.

So we’re avoiding the fractal galaxies of possible worlds & accessibility relations, and staying flat with remnant models & set membership. There are some elegant things we can do with this.

Q: If necessity is “relative to provisa,” doesn’t that make everything arbitrary!?

A: NO! Within a provisum, modal facts are objective. Either φ follows from h’s constraints or it doesn’t, and that’s strictly determined by the mathematical structure Wh (the set of admissible models).

What h-Logic omits are claims about which provisum captures “real” necessity independent of our frameworks (and we’re going to drop modal axioms that try to pull this move).

(Note: Even so, folks who believe in theory-independent modal facts can retain that commitment through privileged provisa, while using h-Logic as a practical tool for organizing modal reasoning. h-Logic doesn’t force you to become a modal anti-realist, it just doesn’t require modal realism to function.)

The Pitfalls We’re Dodging

Pitfall #1: Totalizing Descriptions

Possible-world semantics tends to treat worlds as complete: Every proposition is either true or false at a world. This makes sense if you think of possible worlds as alternate realities. But when we’re reasoning modally in practice, we’re rarely working with complete descriptions. A mathematical axiom system doesn’t settle whether the physical constants have their actual values, a game’s rules don’t determine what color the board is, etc.

In h-Logic, provisa are as partial as you want. The intentional incompleteness makes provisa practical tools rather than grasping at transcendent perspectives.

Pitfall #2: Innately Privileged Actuality

In standard possible-world semantics, one world is special: The actual world. The T axiom (□φ → φ) says that whatever is necessary must be actual.

This sounds okay until you realize it presupposes we’re reasoning from within the actual world. What if we’re doing counterfactual reasoning? What if we’re exploring some odd, novel mathematical systems? What if we’re considering moral frameworks we don’t endorse? What if, heaven forbid, we want to stay humble about our confidence in what is actually the case?

So in h-Logic we punt the T axiom. You can have □hφ without φ being true in some privileged sense. Similarly we reject the B axiom; we work within provisa, and there’s no external standpoint from which to say “but is φ actually true?”

(But if you want to privilege some provisum in order to cash out afterward, go nuts.)

Pitfall #3: Hidden Relativity

Like we said in the intro, traditional notation makes necessity look absolute. When you write “□(bachelors are unmarried),” it seems like you’re stating a fact about the logical structure of reality itself. Only in footnotes do we learn this means “necessary given certain meanings of ‘bachelor’ and ‘unmarried,’” i.e., relative to semantic conventions, which can be different, if we see fit.

And this of course has been a major theme in the material we’ve dealt with thus far, with not just conceptual criteria, but other kinds of naming criteria, as well as identity & equivalence criteria.

This is all captured by our syntactically mandatory subscripts. Under h-Logic you just can’t write a necessity claim without specifying which provisum it’s relative to. This is enforced by the grammar, such that “□φ” without a subscript is a syntax error.

The notation becomes a little chunkier, but it stops “alien hand philosophy”: No longer can you accidentally convince yourself you’ve discovered absolute truths when you’ve merely worked out consequences of particular stipulations. And this is a very big payoff indeed if underspecification + overconfidence are driving heaps of viral yet aimless philosophy chatter.

Pitfall #4: Monolithic Modal Logic

Standard modal logic comes in flavors: K, T, S4, S5, and so forth. These correspond to different constraints on the accessibility relation (reflexivity, transitivity, symmetry, etc.). Some take this to mean that reality has one of these structures and we just need to figure out which.

But this assumes there’s a single correct modal structure to discover. Why should physical necessity, moral obligation, mathematical proof, and epistemic justification all follow the same logical patterns? They work quite differently in practice, after all.

In h-Logic, all single-provisum reasoning has Axioms 4 and 5 whereby iteration collapses (□h□hφ ↔ □hφ, ◊h□hφ → □hφ, etc.) “automatically” for reasons that will be made clear shortly. Variety & fun instead comes from comparing different provisa, their impact on model spaces, and why different impacts occur.

Want to express that something is necessary in Newtonian Physics but not in General Relativity? Write:

□NewtonianPhysicsφ ∧ ¬□GeneralRelativityφ

And hereby this would be false:

□NewtonianPhysics□GeneralRelativityφ ↔ □GeneralRelativityφ,

… Because NewtonianPhysics does not constrain what GeneralRelativity constrains; it does not require GeneralRelativity to constrain anything.

This kind of cross-provisum comparison is expressively powerful and can’t be directly done in standard modal logic. The variety isn’t in different frame structures, but rather in the relationships between different sets of constraints.

The Formal System

Defining Provisa

A provisum h is a pair: (label, Γ) where Γ is a set of formulas.

We write φ ∈ h to mean “provisum h requires φ” or “φ is one of h’s expectations.” The constraints of h are exactly the formulas in the set Γ.

Example: Chess

Chess = {

The board is 8×8,

Pawns move forward one square (or two from start),

Rooks move horizontally or vertically any distance,

Kings move one square in any direction,

...

}

So (The board is 8×8) ∈ Chess, (Pawns move forward) ∈ Chess, etc.

Example: Newtonian Physics

NewtonianPhysics = {

F = ma,

Every action has an equal and opposite reaction,

Gravity follows inverse-square law,

Time is absolute,

Space is absolute,

...

}

Example: Peano Arithmetic

PeanoArithmetic = {

0 is a number,

If n is a number, then S(n) is a number,

For all n: n + 0 = n,

For all n, m: n + S(m) = S(n + m),

...

}

These constraints need not be complete. The Chess provisum doesn’t set constraints about gravity and the NewtonianPhysics provisum doesn’t set constraints about legal Bishop moves.

Provisa are local, partial, and in most contexts purposive tools. This incompleteness is what makes them practical rather than unwieldy totalizing world-descriptions.

(Note: Sometimes it’s convenient to say a provisum h “expects provisum g” or “includes provisum g.” This is just shorthand for h containing all of g’s constraints: h = {P, Q, ...} ∪ g. So you can write things like “if R then (all of g’s constraints)” or manipulate g’s constraints as a unit (h ∪ g, h - g). The nesting is just organizational.)

Admissible Models

Given provisum h, the admissible models are:

Wh = {m : for all φ ∈ h, m ⊨ φ}

So, Wh is the set of all models that “survive” h’s constraints. You can think of it like h winnowing down models until only the ones it’s “cool with” remain. Modal operators then quantify over these admissible models.

Meanwhile, h’s inadmissible models are the complement of Wh:

W̄h = {m: there exists φ ∈ h such that m ⊭ φ}
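To make the winnowing picture concrete, here is a minimal Python sketch. It assumes a toy propositional setting that is not part of the formal system above: a model is just the set of atoms it makes true, and a constraint is a predicate on models. The atom names and the one-constraint `chess` provisum are hypothetical stand-ins.

```python
from itertools import combinations

# Hypothetical toy vocabulary; a model is the frozenset of atoms it makes true.
ATOMS = ["board_is_8x8", "raining"]
MODELS = {frozenset(c) for r in range(len(ATOMS) + 1) for c in combinations(ATOMS, r)}

# A toy Chess provisum with one constraint (a predicate on models).
chess = [lambda m: "board_is_8x8" in m]

# W_h: models that satisfy every constraint.
W = {m for m in MODELS if all(c(m) for c in chess)}
# Complement: models that fail at least one constraint.
W_bar = {m for m in MODELS if any(not c(m) for c in chess)}

print(len(W), len(W_bar))                  # 2 2
print(W | W_bar == MODELS)                 # True: together they cover every model
print(W & W_bar == set())                  # True: no model is in both
print(sorted("raining" in m for m in W))   # [False, True]: Chess is silent on rain
```

The last line is the partiality point in miniature: the surviving models disagree about rain because the toy provisum never mentions it.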

Model Satisfaction

For any model m and provisum h:

m ⊨ □h φ exactly when: for every m’ in Wh, m’ ⊨ φ

m ⊨ ◊h φ exactly when: for some m’ in Wh, m’ ⊨ φ

These definitions don’t depend on which model m we’re looking at. The right side only refers to Wh (the admissible models for provisum h) and whether φ holds in those models. The choice of m is irrelevant. This means modal formulas with a given provisum subscript have uniform truth values; □h φ is either true in all models or true in none. Same for ◊h φ.

This is because “□h φ” asks: “Does φ hold in every model in Wh?” This question has the same answer no matter which model you’re asking from. You’re surveying the entire space Wh rather than checking local accessibility.

So whereas in standard possible-world semantics, “□φ” can be true at one world and false at another (because those worlds access different sets of worlds), in h-Logic, “□h φ” is either valid (true everywhere) or not valid (true nowhere). (Note: This uniformity holds given closed formulas, which we will be sticking with for the purposes of this article.)
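The uniformity claim can be checked by brute force in a small finite setting. The sketch below assumes the same kind of toy encoding (models as sets of true atoms, constraints as predicates); the names `admissible`, `box`, and `diamond` are illustrative helpers, not part of h-Logic's official notation.

```python
from itertools import combinations

ATOMS = ["bishops_move_diagonally", "raining"]  # hypothetical toy vocabulary
MODELS = [frozenset(c) for r in range(len(ATOMS) + 1) for c in combinations(ATOMS, r)]

chess = [lambda m: "bishops_move_diagonally" in m]  # toy provisum

def admissible(h):
    """W_h: the models that satisfy every constraint in h."""
    return [m for m in MODELS if all(c(m) for c in h)]

def box(h, phi):
    """The formula 'box_h phi' as a function of the evaluating model m (which it ignores)."""
    return lambda m: all(phi(w) for w in admissible(h))

def diamond(h, phi):
    """The formula 'diamond_h phi' as a function of the evaluating model m."""
    return lambda m: any(phi(w) for w in admissible(h))

bishops = lambda m: "bishops_move_diagonally" in m
raining = lambda m: "raining" in m

# Evaluate each modal formula from every model and collect the verdicts.
print({box(chess, bishops)(m) for m in MODELS})      # {True}
print({box(chess, raining)(m) for m in MODELS})      # {False}
print({diamond(chess, raining)(m) for m in MODELS})  # {True}
```

Each set has exactly one member, which is the "true everywhere or true nowhere" behavior described above.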

Validity: ⊨ □h φ

I.e., “Every model satisfies □h φ.” Since □h φ has uniform truth value, this is equivalent to, “Every model in Wh satisfies φ.”

Consequence: h ⊢ φ

I.e., “φ is a consequence of h when ⊨ □h φ.” Or, “φ follows from h’s constraints exactly when φ is h-necessary.” (Note: This doesn’t mean φ is one of h’s constraints.)

PeanoArithmetic ⊢ (2 + 2 = 4) because ⊨ □PeanoArithmetic(2 + 2 = 4)

Chess ⊢ (bishops move diagonally) because ⊨ □Chess(bishops move diagonally)

RoyalLaw ⊢ (love one another) because ⊨ □RoyalLaw(love one another)

Duality: ◊h φ ↔ ¬□h ¬φ

I.e., “φ is possible per h” means “not-φ is not necessary per h”

Distribution (K axiom): □h(φ → ψ) → (□h φ → □h ψ)

I.e., if the conditional is necessary, it follows that if the antecedent is necessary, so is the consequent.

Necessitation: If φ is a tautology, then ⊨ □h φ

I.e., tautologies are necessary in every provisum; they hold in every model, so they hold in every admissible model, even if they seem unrelated, e.g., □Chess((it’s raining) ∨ ¬(it’s raining)).

Monotonicity: If g ⊆ h, then Wh ⊆ Wg

I.e., having the same constraints means having the same admissible models; but if h has all of g’s constraints plus more, then every model h admits is also admitted by g, so h allows as many or fewer admissible models (notice the “g” and “h” are switching sides).
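Here is a small sanity check of Monotonicity, again as a sketch under toy assumptions: three hypothetical atoms `p`, `q`, `r`, models as sets of true atoms, and provisa as plain lists of predicates.

```python
from itertools import combinations

ATOMS = ["p", "q", "r"]  # hypothetical atoms
MODELS = {frozenset(c) for r in range(len(ATOMS) + 1) for c in combinations(ATOMS, r)}

def admissible(h):
    """W_h: the models that satisfy every constraint in h."""
    return {m for m in MODELS if all(c(m) for c in h)}

def box(h, phi):
    return all(phi(m) for m in admissible(h))

p = lambda m: "p" in m
q = lambda m: "q" in m

g = [p]
h = g + [q]  # h has all of g's constraints plus one more

W_g, W_h = admissible(g), admissible(h)
print(len(W_g), len(W_h))    # 4 2
print(W_h <= W_g)            # True: more constraints, fewer (or equal) survivors
print(box(g, p), box(h, p))  # True True: g's necessities carry over to h
print(box(g, q), box(h, q))  # False True: h gains necessities g lacks
```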

Iteration Collapse

For any single provisum h:

□h □h φ ↔ □h φ (Axiom 4)

◊h ◊h φ ↔ ◊h φ

◊h φ → □h ◊h φ (Axiom 5)

These come for free because all □h operators quantify over the same set Wh. There’s no “accessibility between models,” we just have one global set of admissible models per provisum.

(“□h □h φ” says “in every admissible model m, it’s true that in every admissible model m’, φ holds.” But the inner quantification doesn’t depend on which model you’re in; it’s the same Wh regardless. So this collapses to “in every admissible model, φ holds,” or just “□h φ.”)

Similarly, “◊h φ → □h ◊h φ” holds because modal formulas have uniform truth values. If ◊h φ is true anywhere, it’s true everywhere, so □h ◊h φ follows.

This shows that h-Logic has a fundamentally different structure from Kripke semantics. In Kripke frames, you get different modal logics (K, T, S4, S5) by imposing different conditions on accessibility relations (reflexive, transitive, symmetric, Euclidean). h-Logic doesn’t have accessibility relations (just membership in Wh) so these iterations automatically collapse.

By contrast, this fails:

□h ◊h φ → ◊h □h φ

Even if φ is necessarily possible (holds in some h-admissible model), it needn’t be possibly necessary (there might be no way to restrict Wh to make φ hold in all remaining models).

E.g., let h = {there are exactly 3 gems}. Consider φ = “a gem is red.”

Wh contains models with 3 gems of various colors

◊h φ is true (some models have a red gem)

So □h ◊h φ is true (by uniformity)

But □h φ is false (not all models have a red gem)

So ◊h □h φ is false (no model makes □h φ true)
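The gem example can be run mechanically. The sketch below makes some assumptions the text does not: candidate models hold between 1 and 4 gems, there are only two colors, and a model is a tuple of gem colors. The helper names are illustrative.

```python
from itertools import product

COLORS = ["red", "blue"]  # hypothetical two-color palette

# A model is a tuple of gem colors; candidate models hold 1 to 4 gems.
MODELS = [gems for n in range(1, 5) for gems in product(COLORS, repeat=n)]

h = [lambda m: len(m) == 3]  # "there are exactly 3 gems"

def admissible(prov):
    return [m for m in MODELS if all(c(m) for c in prov)]

def box(prov, phi):
    # The resulting formula ignores its evaluating model m (uniformity).
    return lambda m: all(phi(w) for w in admissible(prov))

def diamond(prov, phi):
    return lambda m: any(phi(w) for w in admissible(prov))

phi = lambda m: "red" in m  # "a gem is red"
here = MODELS[0]            # any evaluating model gives the same verdict

print(diamond(h, phi)(here))           # True
print(box(h, diamond(h, phi))(here))   # True
print(box(h, phi)(here))               # False (three blue gems is admissible)
print(diamond(h, box(h, phi))(here))   # False
```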

I hope that was intuitive enough. As you can see, the “action” in h-Logic comes from comparing admissible models per and/or across provisa. And the latter serves as the first stop in our adventures into h-Logic’s -= Philosophical Payoffs =-.

Cross-Provisum Comparisons

This is where h-Logic’s neat power emerges… but first, a straightforward example.

Let’s say that WholeNumbers are our provisum for arithmetic with just 0, 1, 2, 3, etc., and RationalNumbers are our provisum that includes fractions.

Since the WholeNumbers provisum is contained in RationalNumbers (rational arithmetic includes all whole number axioms plus more), we have:

□WholeNumbers φ → □RationalNumbers φ

I.e., “Every fact necessary for whole numbers remains necessary when we allow fractions.”

But rational numbers introduce operations whole numbers are silent about. We can express this directly:

□RationalNumbers(5 / 2 = 2.5) ∧ ¬□WholeNumbers(5 / 2 = 2.5)

I.e., "Dividing 5 by 2 yields 2.5 in rational arithmetic, but whole number arithmetic takes no position on this, since it doesn't have the expressive resources."

That make sense?

Standard modal logic can’t directly state “this is necessary in rational arithmetic but not in whole number arithmetic”; you’d need a meta-language. But in h-Logic, it’s just a boring formula.

This unlocks a bunch of nifty tricks.

Scientific Theory Change

For example, scientific theories can be framed as provisa with certain constraints (against which you can pit provisa from observations).

Roughly speaking, we can do stuff like:

□NewtonianPhysics(time is absolute) ∧ ¬□GeneralRelativity(time is absolute)

□GeneralRelativity(nothing travels faster than c) ∧ ¬□NewtonianPhysics(nothing travels faster than c)

□NewtonianPhysics(velocities compose additively) ∧ ¬□GeneralRelativity(velocities compose additively)

Scientific revolutions become changes in which provisum we adopt. And we can say things like, “Both theories agree on this consequence within the regime where an object’s velocity is much less than the speed of light,” by enriching the provisa with conditionals.
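As a rough illustration of the three comparisons above, the following sketch reduces each theory to a couple of propositional atoms. This is a loud simplification: the atoms and the two constraint lists are hypothetical stand-ins, and the toy GeneralRelativity provisum is simply left silent on absolute time and additive velocities rather than denying them.

```python
from itertools import combinations

ATOMS = ["time_is_absolute", "nothing_outruns_c", "velocities_add"]  # toy atoms
MODELS = [frozenset(c) for r in range(len(ATOMS) + 1) for c in combinations(ATOMS, r)]

def box(h, phi):
    """True when phi holds in every model that satisfies all of h's constraints."""
    return all(phi(m) for m in MODELS if all(c(m) for c in h))

time_abs = lambda m: "time_is_absolute" in m
limit_c = lambda m: "nothing_outruns_c" in m
vel_add = lambda m: "velocities_add" in m

# Crude stand-ins for the two theories (assumptions, not physics).
newtonian_physics = [time_abs, vel_add]
general_relativity = [limit_c]

for phi, a, b in [
    (time_abs, newtonian_physics, general_relativity),
    (limit_c, general_relativity, newtonian_physics),
    (vel_add, newtonian_physics, general_relativity),
]:
    # "Necessary per a, but not necessary per b" is an ordinary object-level check.
    print(box(a, phi) and not box(b, phi))  # True each time
```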

Given the above, I bet you see the next one coming (because it’s kind of the same thing).

Epistemic Disagreement

“Hey, when’s the meeting?” Quinn was told by Sean it’s on Tuesday or Wednesday, but doesn’t know which. Robert was told by Sean it’s on Tuesday. Both trust Sean.

Quinn’s knowledge provisum:

QuinnData = {

meeting is Tuesday or Wednesday

}

Robert’s knowledge provisum:

RobertData = {

meeting is Tuesday

}

For Quinn:

□QuinnData(the meeting is Tuesday or Wednesday); i.e., “Quinn knows it’s on either Tuesday or Wednesday.”

◊QuinnData(the meeting is Tuesday); i.e., “It could be Tuesday, as far as Quinn knows.”

◊QuinnData(the meeting is Wednesday); i.e., “It could be Wednesday, as far as Quinn knows.”

For Robert:

□RobertData(the meeting is Tuesday); i.e., “Robert knows it’s Tuesday.”

□RobertData(the meeting is Tuesday or Wednesday); i.e., “Robert also knows the disjunction (trivially)”

Both know it’s Tuesday or Wednesday, but Robert also knows it’s Tuesday. Robert has strictly more information than Quinn. His provisum entails hers and adds specificity (that is, his knowledge admits fewer models).

Suppose Quinn gets Robert’s information. We do this by joining provisa.

Her new provisum:

QuinnDataUpdated = QuinnData ∪ RobertData

Now □QuinnDataUpdated(meeting is Tuesday). Easy!
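The update can be replayed in a few lines. This is a sketch under toy assumptions: two hypothetical atoms for the two days, models as sets of true atoms, and joining provisa modeled as list concatenation. Note the toy vocabulary does not rule out a meeting on both days; that would be a further stipulation.

```python
from itertools import combinations

ATOMS = ["tuesday", "wednesday"]  # hypothetical atoms: "the meeting is on that day"
MODELS = [frozenset(c) for r in range(len(ATOMS) + 1) for c in combinations(ATOMS, r)]

def admissible(h):
    return [m for m in MODELS if all(c(m) for c in h)]

def box(h, phi):
    return all(phi(m) for m in admissible(h))

def diamond(h, phi):
    return any(phi(m) for m in admissible(h))

tuesday = lambda m: "tuesday" in m
wednesday = lambda m: "wednesday" in m
tue_or_wed = lambda m: tuesday(m) or wednesday(m)

quinn_data = [tue_or_wed]
robert_data = [tuesday]
quinn_data_updated = quinn_data + robert_data  # joining provisa

print(box(quinn_data, tue_or_wed))                                   # True
print(diamond(quinn_data, tuesday), diamond(quinn_data, wednesday))  # True True
print(box(quinn_data, tuesday))                                      # False
print(box(robert_data, tue_or_wed))                                  # True
print(box(quinn_data_updated, tuesday))                              # True
```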

h-Logic is in part motivated by epistemic fallibilism. There are various interpretations of fallibilism; I root for those where justification is relative to standards, and you can have false knowledge.

Meanwhile, a lot of literature on epistemic modal logic kept referring to “JTB” knowledge, as if that framing were foregone & problem-free. Not cool. Suffice it to say, this “T” is also punted in h-Logic (alongside Axiom T, as noted above).

This move is, to be sure, assertive. David Lewis wrote:

“If you are a contented fallibilist, be honest, be naive, hear it fresh: 'He knows but has not eliminated all possibilities of error.' Even if you've numbed your ears, doesn't this overt, explicit fallibilism still sound wrong?”

And yeah, it kind of does.

Nevertheless, in h-Logic we’re biting that bullet. So when we say □QuinnData φ means “Quinn knows φ” in h-Logic, this means φ follows from what Quinn currently takes as true given her justificatory standards, whatever they are. Quinn’s provisum can be incomplete or mistaken just like any other provisum.

Hence we’re only dealing with “JB” knowledge in h-Logic, and if we want, we can use different provisa to reflect a single individual’s knowledge per different justificatory standards, e.g., a provisum in which Sean’s word is not taken as justifying. (Remember I said they trusted Sean? Now consider a Shyamalan twist in which Sean gave them bad info, yet they trust him anyway and therefore they regard the info from him justified.)

Anyway, if you want JTB’s T back, just call a provisum “T” under h-Logic.

Moral Disagreement

Epistemic disagreement involves different information, but moral disagreement often persists even when all parties agree on the facts.

Consider battlefield triage. Two wounded soldiers: One ally with a minor injury, one enemy with a severe injury. Who do you treat first?

The Moral Provisa

HumanityFirst = {

prioritize by medical need alone

}

ComradesFirst = {

prioritize allies irrespective of medical need

}

The Situational Provisum

ThisBattlefield = {

comrade has minor injury,

enemy has severe injury; more medical need,

it's time to administer care!

}

(Aside: How should we interpret a situational provisum’s constraints? Like this: “This provisum holds or expects that these things are so.” This is handy because different situational provisa can be used to represent different perspectives, like when my own eyewitness conflicts with someone else’s. Here, necessity is lower-case-t “truth, per.”)

We capture our dilemma by joining our situational provisum to directive provisa with set union:

□(ThisBattlefield ∪ HumanityFirst)(treat enemy first)

“You must treat that enemy soldier, now!”

□(ThisBattlefield ∪ ComradesFirst)(treat comrade first)

“You must treat our own soldier, now!”

Two people giving the above conflicting advice may agree on all the facts (injuries, identities, options), but they’re disagreeing about which moral provisum to adopt. The appearance of contradiction comes from undernotation in deontic logic (“□(treat enemy first)” vs. “□(treat comrade first)”) as if obligation made sense with no obliging standards or norms, which it never did.
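The triage dilemma can be sketched the same way. The propositional encoding below is an assumption made for illustration: "it's time to administer care" is read as "exactly one soldier gets treated first," and each moral provisum is boiled down to a single predicate.

```python
from itertools import combinations

ATOMS = ["enemy_needs_more", "treat_enemy_first", "treat_comrade_first"]  # toy atoms
MODELS = [frozenset(c) for r in range(len(ATOMS) + 1) for c in combinations(ATOMS, r)]

def box(h, phi):
    """True when phi holds in every model that satisfies all of h's constraints."""
    return all(phi(m) for m in MODELS if all(c(m) for c in h))

enemy_needs_more = lambda m: "enemy_needs_more" in m
treat_enemy = lambda m: "treat_enemy_first" in m
treat_comrade = lambda m: "treat_comrade_first" in m

# Situational provisum: the shared facts, plus "exactly one is treated first".
this_battlefield = [enemy_needs_more, lambda m: treat_enemy(m) != treat_comrade(m)]

# Directive provisa (hypothetical encodings of the two policies).
humanity_first = [lambda m: treat_enemy(m) if enemy_needs_more(m) else treat_comrade(m)]
comrades_first = [treat_comrade]

print(box(this_battlefield + humanity_first, treat_enemy))    # True
print(box(this_battlefield + comrades_first, treat_comrade))  # True
# Both joined provisa agree on the facts of the situation.
print(box(this_battlefield + humanity_first, enemy_needs_more),
      box(this_battlefield + comrades_first, enemy_needs_more))  # True True
```

The two "musts" come out true side by side because each is indexed to a different joined provisum.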

Okay, okay, so I just took a shot at moral realism there, but h-Logic provides some diplomatic insights:

Moral realism might emerge when a provisum is taken for granted so thoroughly that moral language & thinking evolve to omit explicit relativization. If nearly everyone detests kicking puppies for fun, why bother citing that norming care? Just cut to the constraint!

Or maybe there really are provisum-independent moral facts that HumanityFirst, or ComradesFirst, or some other provisum reflects. You can express them in a provisum (reflecting such facts) and h-Logic boots up a realist context with that provisum privileged.

Either way, we have a framework that accounts for not just moral realism and antirealism, but realisms & antirealisms broadly. Realism is a feature of contexts with privileged or implicit provisa. When you demote & explicate all provisa, the context is antireal, with external dependences we can chase & interrogate.

(Note: h-Logic's treatment of normative modality is compatible with and naturally models Allan Gibbard's expressivist metaethics. When Gibbard says normative claims express acceptance of norm-systems, h-Logic formalizes this as the constraints of provisa. Both avoid commitment to stance-independent moral facts while preserving objectivity within adopted frameworks.)

And treating moral statements as provisum-relative necessities has an additional payoff: It solves a long-standing problem for non-cognitivist ethics, called the Frege-Geach Problem.

Non-cognitivists claim moral statements don’t state facts; rather, “stealing is wrong” expresses disapproval or prescribes behavior (“Boo stealing!”).

But then how do moral statements embed in logical contexts?

Conditional: “If stealing is wrong, then getting your friend to steal is wrong”

Negation: “It’s not the case that stealing is wrong”

Question: “Is stealing wrong?”

Belief: “Edgar believes stealing is wrong”

If “stealing is wrong” just means “Boo stealing!” then “If stealing is wrong...” becomes “If boo stealing...” which is nonsense; logical contexts need truth-apt components.

But with h-Logic’s constraints, we can analyze both “stealing is wrong” and “boo stealing” as □h(¬steal); which is to say, you’re expected not to steal according to a directive provisum that one doesn’t steal. Whether you want to characterize this as a cognitive phrase or just a non-cognitive “thumbs down & frown” is your call; either way, as long as it’s a constraint, we can then express it as a truth-apt modal proposition under h-Logic, whereby embeddings work:

□h(¬steal) → □h(¬(encourage friend to steal))

Neat! But is the above valid? Well, no actually, and it shouldn’t be. A provisum listing {¬steal, ¬lie, ¬bully} might not explicitly forbid encouraging others to do these things. If a moral provisum wants to prohibit that, it has to say so:

h = {

¬steal,

¬lie,

¬bully,

∀φ(¬φ → ¬encourage(φ)), // A.k.a., ¬scandalize

...

}

Now the above conditional is valid, since the provisum contains both the specific prohibition and the generalization principle that extends it to complicity. And again, it’s up to you if you want to say this as, “That is forbidden!” or “I expect you not to do that!” or “Boo to that!”
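To see the embedded conditional flip from false to true, here is a sketch with two hypothetical atoms. It instantiates the general principle only for stealing (¬steal → ¬encourage_steal), which is an assumption about how the quantified constraint would be applied.

```python
from itertools import combinations

ATOMS = ["steal", "encourage_steal"]  # hypothetical toy atoms
MODELS = [frozenset(c) for r in range(len(ATOMS) + 1) for c in combinations(ATOMS, r)]

def box(h, phi):
    """True when phi holds in every model that satisfies all of h's constraints."""
    return all(phi(m) for m in MODELS if all(c(m) for c in h))

no_steal = lambda m: "steal" not in m
no_encourage = lambda m: "encourage_steal" not in m
# One instance of the generalization principle: not-steal implies not-encourage-steal.
complicity = lambda m: ("steal" in m) or ("encourage_steal" not in m)

def embedded_conditional(h):
    """box_h(not steal) -> box_h(not encourage friend to steal)."""
    return (not box(h, no_steal)) or box(h, no_encourage)

bare = [no_steal]               # prohibition only
full = [no_steal, complicity]   # prohibition plus the complicity principle

print(embedded_conditional(bare))  # False
print(embedded_conditional(full))  # True
```

Either way the conditional gets a definite truth value, which is the embedding behavior the Frege-Geach worry says expressivists can't have.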

Many of us have strong feelings that this constraint against scandalization should come “for free.” But it really shouldn’t, and h-Logic helps us see that by forcing us to write everything relevant down. You can have a prohibition that applies to you alone that does not automatically forbid encouraging others to do that thing, e.g.,

MyJobExpectations = {

¬(access the server closet),

...

}

Just because I’m not allowed to access the server closet doesn’t mean it would be wrong, or violate expectations, for me to encourage the IT guy to access the server closet.

The Frege-Geach Problem has been a thorn in expressivism's side since Peter Geach posed it in 1965. Attempts to address it have been hit & miss. Now, through its modularity, h-Logic offers machinery for moral expression in all the forms it takes. We get truth-aptness, since (e.g.) □h(¬steal) is true or false, yet this can be expressed as “Boo stealing!” Embeddings work, and can appear in conditionals, negations, belief reports, etc. Provisa aren’t privileged (per moral anti-realism) unless they’re privileged (yielding a local moral realism). And we’ve accounted for variation, disagreement, and are prepared for whatever pluralism throws at us.

We did it, everyone.

But we’re not done yet.

Counterfactuals & “Similarity”

Having seen how cross-provisum comparisons and extensions work, we’re ready to tackle one of modal logic’s trickiest applications: Counterfactual reasoning. What does it mean to say “if things had been different, blah blah blah would follow”?

Well, it’s not so tricky anymore. Counterfactuals have a natural analysis in h-Logic using provisum extension with explicit conflict removal when needed.

Example With No Conflict

Suppose we’re working within a provisum about Roshambo (Rock, Paper, Scissors):

Roshambo = {

two players,

three options per round: rock, paper, scissors,

players reveal their option simultaneously,

rock beats scissors,

scissors beats paper,

paper beats rock,

same choice means tie

}

We want to answer, “If we played best-of-three instead of single round, would rock still beat scissors?”

To do so we extend Roshambo with the new constraint using set union, like we did in the moral battlefield situation. Since “best-of-three format” doesn’t conflict with any existing constraint, we can simply add it. The counterfactual becomes:

□(Roshambo ∪ {best-of-three})(rock beats scissors)

When φ doesn’t create conflicts, we have an equivalence:

⊨ □_(h ∪ {φ}) ψ iff ⊨ □_h(φ → ψ)

Hence our counterfactual reduces to checking whether □Roshambo(best-of-three → rock beats scissors). Since the game rules don’t depend on format, this holds. So the counterfactual is true.

(You might wonder about “best-of-three → rock beats scissors,” since the Roshambo provisum said nothing about best-of-three. This is a quirk of the material conditional, which is true whenever either of the following is the case:

“best-of-three” is false, making the conditional vacuously true, OR

“best-of-three” is true AND “rock beats scissors” is true

Don’t get hung up here; we’ll explore how h-Logic helps with conditional relevance later.)
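To see the extension equivalence in action, here is a minimal Python sketch. It assumes a toy model space built from just two boolean atoms, and the helper names `admissible` and `box` are illustrative stand-ins for Wh and □h, not part of any official h-Logic tooling.

```python
from itertools import product

# Assumption: a "model" is a truth assignment to these two atoms only.
ATOMS = ("best_of_three", "rock_beats_scissors")

def all_models():
    """Every truth assignment to the atoms (a toy stand-in for the model space)."""
    return [dict(zip(ATOMS, values))
            for values in product([False, True], repeat=len(ATOMS))]

def admissible(h):
    """W_h: the models that satisfy every constraint in provisum h."""
    return [m for m in all_models() if all(c(m) for c in h)]

def box(h, psi):
    """Box_h psi: psi holds in every admissible model of h."""
    return all(psi(m) for m in admissible(h))

rock_beats_scissors = lambda m: m["rock_beats_scissors"]
best_of_three = lambda m: m["best_of_three"]

# Only the one rule we care about; the real Roshambo provisum has more.
roshambo = [rock_beats_scissors]

# Left side: extend the provisum with the antecedent, then ask for necessity.
left = box(roshambo + [best_of_three], rock_beats_scissors)
# Right side: keep the provisum, ask whether the material conditional is necessary.
right = box(roshambo, lambda m: (not best_of_three(m)) or rock_beats_scissors(m))

print(left, right)  # True True
```

Both sides agree here because adding “best-of-three” leaves the extended provisum with admissible models; the conflict case needs the subtraction step covered next.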

Now, the above example was pretty easy, because our extension lived in harmony with the Roshambo provisum. But what if a baseline provisum explicitly includes information that conflicts with the counterfactual’s antecedent?

Example With Conflict

Round1 = {

Roshambo,

Alice played rock,

Bob played paper

}

Alice complains, “If I had played paper instead of rock, I would have won!”

Adding “Alice played paper” conflicts with “Alice played rock.” Simply adding it would create an inconsistent provisum, one with no admissible models (Wh = ∅) since no models can satisfy both constraints simultaneously.

We need explicit conflict removal using the subtraction operation:

h - {ψ₁, ψ₂, ...}

This removes the specified constraints from h.

For Alice’s counterfactual:

Round1ButPaper = (Round1 - {Alice played rock}) ∪ {Alice played paper}

First remove the conflicting constraints, then add the counterfactual antecedent.

Now we can check if □Round1ButPaper(Alice won) is true.

□Round1ButPaper(Alice paper ∧ Bob paper → tie) → ¬□Round1ButPaper(Alice won)

Uh oh. That’s a tie, not a win, hence Alice’s counterfactual is false.

Cool, huh?

It’s important to point out that different choices of what to remove yield different outcomes, and there’s no absolute sense of “minimal change.” Fortunately for us, underdetermination in counterfactual reasoning is a risk that h-Logic is well-equipped to take seriously.

Example with Underdetermination

Round2 = {

Roshambo,

Alice played rock,

Bob played paper

}

Alice now claims: “If I hadn’t played rock, I would have won!”

The antecedent “Alice didn’t play rock” doesn’t specify what Alice played instead. We must choose.

Round2ButScissors = (Round2 - {Alice played rock}) ∪ {Alice played scissors}

□Round2ButScissors(Alice scissors ∧ Bob paper → Alice won)

→ □Round2ButScissors(Alice won)

Here, Alice wins! Alice’s counterfactual is true under this revision.

Round2ButPaper = (Round2 - {Alice played rock}) ∪ {Alice played paper}

□Round2ButPaper(Alice paper ∧ Bob paper → tie)

→ ¬□Round2ButPaper(Alice won)

A tie, like in our first example. Alice doesn’t win here, so the counterfactual is false under this revision.

And hence Alice’s counterfactual “I would have won” is underdetermined, true if she would have played scissors, but false if she would have played paper.

This demonstrates 3 useful evaluations of counterfactuals in h-Logic:

Simple underdetermination: Different revisions can make a counterfactual true or false.

Partial vindication: A counterfactual can be true under some but not all revisions.

Complete falsification: A counterfactual can be false under all reasonable revisions.

The user must explicitly decide which conflict-resolution strategy to use, and h-Logic is good about showing what follows from each choice.
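The revise-then-check routine for Alice’s underdetermined counterfactual can be sketched in Python. This is a toy under stated assumptions: a model is just a pair of moves, the Roshambo rules are baked into a `BEATS` table rather than listed as constraints, and the names `revise`, `admissible`, and `box` are made up for illustration.

```python
from itertools import product

MOVES = ("rock", "paper", "scissors")
# Assumption: the win rules live in this table instead of in the provisum.
BEATS = {("rock", "scissors"), ("scissors", "paper"), ("paper", "rock")}

def admissible(h):
    """W_h: every (alice, bob) pair of moves satisfying all constraints in h."""
    return [m for m in product(MOVES, MOVES) if all(c(m) for c in h.values())]

def box(h, psi):
    """Box_h psi: psi holds in all admissible models (vacuously true if none)."""
    return all(psi(m) for m in admissible(h))

def revise(h, remove, add):
    """(h - remove) U add, with constraints keyed by their labels."""
    kept = {label: c for label, c in h.items() if label not in remove}
    return {**kept, **add}

def alice_played(move):
    return lambda m: m[0] == move

alice_won = lambda m: (m[0], m[1]) in BEATS

round2 = {
    "Alice played rock": alice_played("rock"),
    "Bob played paper": lambda m: m[1] == "paper",
}

# Evaluate "Alice won" under each way of filling in "Alice didn't play rock".
verdicts = {}
for move in ("paper", "scissors"):
    revised = revise(round2,
                     remove={"Alice played rock"},
                     add={f"Alice played {move}": alice_played(move)})
    verdicts[move] = box(revised, alice_won)

print(verdicts)  # {'paper': False, 'scissors': True}
```

Skipping the removal step and simply adding “Alice played paper” to `round2` leaves `admissible` with an empty list, which is the inconsistency the subtraction operation exists to avoid.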

As stated, there is no absolute sense of “minimal change” here. David Lewis’s account of counterfactuals involved finding the “closest” possible world where the antecedent is true, judged by a similarity metric. This involved small “miracles,” localized violations of actual laws to make the antecedent true without disturbing things too much.

h-Logic’s revision operations are like these “miracles.” The difference is that we’re going to be specific about the magic we wrought, because we don’t consider phrases like “small” and “too much” to be safe enough for serious specification. If you want to employ some criteria to judge similarity (or the size or the relevance) of your intervention, do it with another provisum.

And this is also the tack we’ll take when determining conditional “relevance,” in the section happening… NOW!

Conditionals & “Relevance”

Distinguishing relevant from irrelevant conditionals is a persistent problem in modal logic, especially when cashing it out with real-world interpretation. The material conditional φ → ψ is true whenever φ is false or ψ is true, leading to seriously weird-sounding stuff:

“If the moon is made of cheese, then 2 + 2 = 4”

Here, the antecedent is false, so this material conditional is true.

But this sounds like the moon being made of cheese is making 2 + 2 = 4 true, which is stupid.

“If Paris is in France, then grass is green”

Here, both the antecedent & consequent are true, so this material conditional is true.

But this sounds like Paris being in France is what makes grass green, which is stupid.

Strict implication □(φ → ψ) helps slightly, but still allows irrelevancies when φ is impossible or when ψ is tautological.

Meanwhile, h-Logic provides an elegant solution through provisum extension. The conditional’s relevance becomes visible in the structure of admissible models.

Consider the extension property we saw in counterfactuals. When φ is consistent with h (i.e., W(h ∪ {φ}) ≠ ∅):

⊨ □(h ∪ {φ}) ψ iff ⊨ □h(φ → ψ)

This tells us ψ follows from adding φ to h’s constraints exactly when the material conditional φ → ψ is h-necessary.

But the left side makes the dependence structure explicit:

Start with Wh (models admissible under h)

Restrict to W(h ∪ {φ}) (models that also satisfy φ)

Check if ψ holds in all of these restricted models

The conditional is relevant when this restriction “matters”; when adding φ constrains admissible models. This in turn helps us detect irrelevant conditionals.

(Note: The consistency requirement matters, since if φ contradicts h, then W(h ∪ {φ}) = ∅, making □(h ∪ {φ}) ψ vacuously true for any ψ, even absurdities. The material conditional on the right side won't always be vacuously true in the same way, so the equivalence breaks down. This is why we needed explicit conflict removal for counterfactuals earlier.)

Example When ψ is Already Necessary

Let’s do the moon one. “If the moon is made of cheese, then 2 + 2 = 4” feels irrelevant even though it’s true per the material conditional.

Arithmetic = {Peano axioms}

φ = “moon is made of cheese”

ψ = “2 + 2 = 4”

We ask:

⊨ □Arithmetic φ?

No (since the moon’s composition is not in arithmetic).

⊨ □Arithmetic ψ?

Yes (2 + 2 = 4 follows from Peano axioms)

When □h ψ holds but □h φ doesn’t, the conditional is irrelevant; φ isn’t “doing any work.” ψ was already necessary regardless of φ. Since W(Arithmetic ∪ {φ}) ⊆ WArithmetic and ψ holds in all of WArithmetic, adding φ can only shrink the set of admissible models, and ψ already holds in every one of them. The moon’s composition is irrelevant to Arithmetic’s mandates.

Example When Both are True by Happenstance

Now let’s do the Paris one: “If Paris is in France, then grass is green.” Both antecedent & consequent are true, but there’s no relevant connection.

h = {

Paris is in France,

grass is green,

water is wet,

...

}

Here we ask, “What provisa vindicate these constraints? Are they different?”

And, of course, they are.

Geography = {European cities' locations, ...}

Botany = {plant properties, plant types, chlorophyll making things green under normal conditions, ...}

We ask:

⊨ □_(Geography ∪ {Paris in France}) (grass green)?

No.

⊨ □_(Botany ∪ {grass green}) (Paris in France)?

No.

So, these constraints trace to different vindicating provisa. Neither necessitates the other. Hence to establish relevant necessitation, you need vindication, that is, upstream provisa wherein those constraints were necessitated, and then you observe whether those provisa are related or unrelated to one another.

Example with Relevant Connection

Here we’re interested in the case where φ is consistent with h, ψ is not already h-necessary, but adding φ makes ψ necessary.

Here we’ll use Roshambo for h, “Alice plays rock & Bob plays scissors” for φ, and “Alice wins” for ψ. So, “Alice plays rock & Bob plays scissors” is consistent with Roshambo, “Alice wins” is not already Roshambo-necessary, but adding “Alice plays rock & Bob plays scissors” to Roshambo makes “Alice wins” necessary.

We just ask:

⊨ □Roshambo (Alice plays rock & Bob plays scissors)?

No (since there are many possible action states).

⊨ □Roshambo (Alice wins)?

No (since there are many possible winner states).

⊨ □(Roshambo ∪ {Alice plays rock & Bob plays scissors}) (Alice wins)?

Yes.

This tells us that φ does “legit work.” It constrains WRoshambo to only models where Alice plays rock and Bob plays scissors, and in all such models, Alice wins. Ergo, the conditional is relevant.

To review, we want to know if φ → ψ is a relevant conditional. h-Logic has us ask:

Is ψ already h-necessary? If yes, then it’s not relevant since the consequent holds regardless of the antecedent.

Do φ and ψ trace to different vindicating provisa? If yes, then there’s no structural connection, like entailment or causation; it’s just happenstance.
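The first of these checks lends itself to a small Python sketch. It covers only the “is ψ already h-necessary?” diagnostic plus the consistency requirement, not the tracing of vindicating provisa. The model space (pairs of moves), the `BEATS` table, and the function `diagnose` with its verdict strings are all assumptions made for illustration.

```python
from itertools import product

MOVES = ("rock", "paper", "scissors")
# Assumption: Roshambo's win rules are encoded here rather than as constraints.
BEATS = {("rock", "scissors"), ("scissors", "paper"), ("paper", "rock")}

def admissible(h):
    """W_h: all (alice, bob) move pairs satisfying every constraint in h."""
    return [m for m in product(MOVES, MOVES) if all(c(m) for c in h)]

def box(h, psi):
    """Box_h psi: psi holds in every admissible model."""
    return all(psi(m) for m in admissible(h))

def diagnose(h, phi, psi):
    """Classify the conditional phi -> psi relative to provisum h."""
    if not admissible(h + [phi]):
        return "phi conflicts with h"
    if box(h, psi):
        return "irrelevant: psi is already h-necessary"
    if box(h + [phi], psi):
        return "relevant: phi does the work"
    return "psi does not follow even with phi"

roshambo = []  # no constraints on which moves get played
phi = lambda m: m == ("rock", "scissors")            # Alice rock & Bob scissors
alice_wins = lambda m: m in BEATS
same_choice_no_win = lambda m: m[0] != m[1] or m not in BEATS  # holds in every model

print(diagnose(roshambo, phi, alice_wins))
# relevant: phi does the work
print(diagnose(roshambo, phi, same_choice_no_win))
# irrelevant: psi is already h-necessary
```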

Traditional modal logic omits provisum-relativity so these diagnoses can be difficult, like finding your keys with the lights off. And I hope you see this as a recurring theme: What starts as a widespread & iffy habit of undernotation in modal logic leads to trouble as soon as things get complicated. h-Logic’s niddling requirements make our lives harder initially, but life gets better later, exactly like good coding conventions.

But it also makes clear where we have to do manual labor in order to get it right. We’re the ones choosing the provisa, and the constraints in those provisa need to be exhaustive (as far as we care). Relevance is a function of what’s relevant to us. Only then can the above diagnostics be used to check whether a conditional is doing relevant work.

Free Will & Alternative Possibilities

With counterfactuals, conditionals, and relevance, let’s get ambitious for a minute and go after a bigger topic.

The Principle of Alternative Possibilities (PAP) says you’re morally responsible only if you “can have done otherwise.” Many incompatibilists argue that determinism rules out alternative possibilities, and therefore that determinism rules out responsibility.

h-Logic reveals the real issue isn’t determinism, it’s hidden provisum-relativity in “can” (and this affects all potency terms).

Let’s say you chose chocolate ice cream at 2:20. Our situational provisum at 2:21, given determinism, includes these:

PostChoiceDeterministic = {

determinism holds,

all past events up to (but excluding) 2:20,

you chose chocolate at 2:20,

...

}

So, can you have done otherwise?

◊PostChoiceDeterministic(you chose vanilla at 2:20)?

No. Given determinism plus past plus laws, WPostChoiceDeterministic contains only models where you chose chocolate. Our incompatibilist says, “See? Determinism rules out alternate possibilities.”

But let’s remove determinism:

PostChoiceIndeterministic = {

all past events up to (but excluding) 2:20,

you chose chocolate at 2:20,

...

}

Now can you have done otherwise?

◊PostChoiceIndeterministic(you chose vanilla at 2:20)?

Well… uh… no! Once chocolate-choosing is past, it’s a constraint within your provisum. WPostChoiceIndeterministic contains only models where you chose chocolate, because that’s what happened.

So on these post-choice provisa, determinism makes no difference. The past is fixed not because of causal laws, but because it’s past. If φ ∈ h as a past fact, then ¬◊h(¬φ).

By the time we call you to account for your evil chocolate choice, the action is already necessary relative to any provisum that includes it happening. So determinism is a red herring.

But, of course, there are different meanings of “can have done otherwise.” Let’s slice them apart. We saw that “can have done otherwise” can’t mean ◊PostChoice(did otherwise); that’s always false. So it must mean, “If circumstances had been relevantly different, would you have chosen differently?”

And we can model that like we did earlier, in the section on counterfactuals, by granting different deliberation in our “holodeck simulation”:

Counterfactual = (PostChoice - {chose chocolate, some past facts})

∪ {deliberated differently, chose vanilla}

And now ◊Counterfactual(you chose vanilla at 2:20) is true.

So the repaired PAP is that you’re properly held responsible if ◊Counterfactual(you did otherwise), where the counterfactual alters deliberation/circumstances as relevant. Like we saw with conditional relevance, this will depend on what you deem relevant, particularly if you’re the one who assigned the responsibility in the first place.

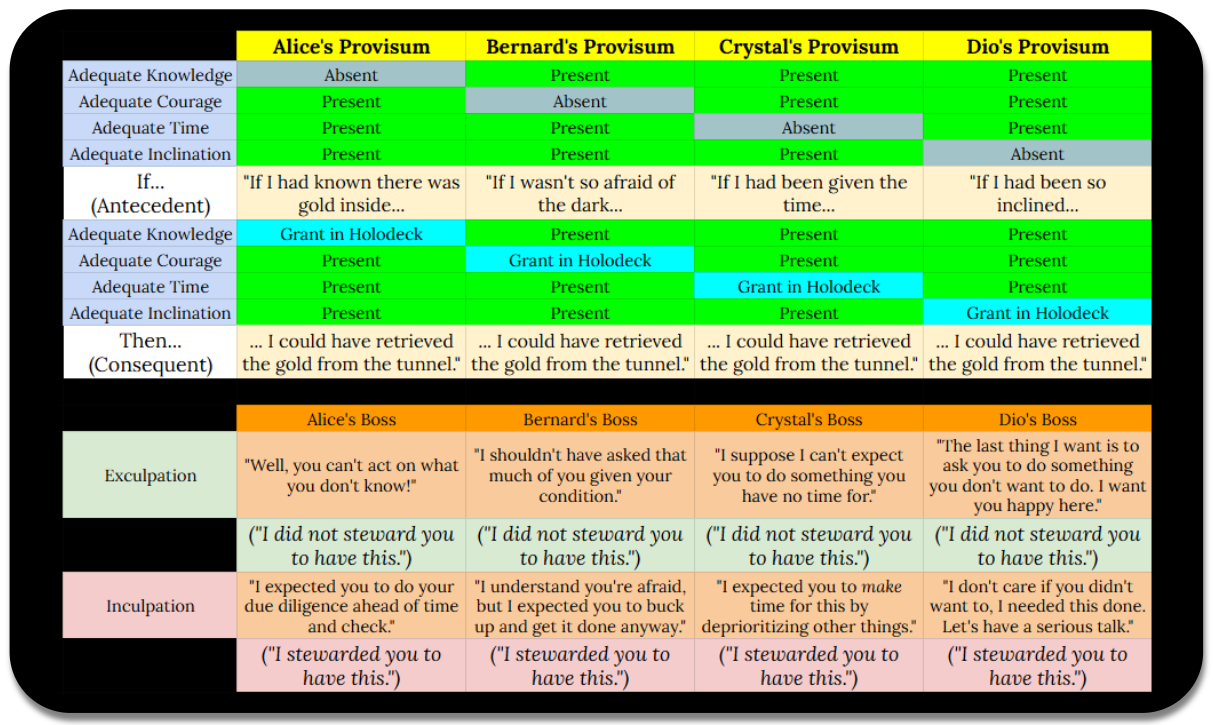

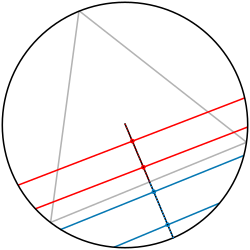

Below we have 4 situational (result) provisa for 4 different employees who all failed to deliver on some job, and 4 counterfactual provisa tailored to each of their shortcomings. Whether they are held culpable for the failure will depend not just on the truth of the counterfactual, but whether they, who assigned responsibility, considered that counterfactual relevant:

Savvy readers may be anticipating that you can model the accounting provisa as well, but I’ll leave that effort to whoever wants it.

This all leaves us with a meaning of morally-significant free will that properly relativizes significance [to the job] and freedom (against the presence of significant [to the job] interference).

And now we’ve escaped the maze of endless conceptual analysis and limitless edge cases and counterexamples.

Verily, h-Logic can handle Frankfurt cases without “bullshit.”

Probability & Bertrand’s Paradox

So far we’ve relativized our diamonds & squares, but we’re stuck with bivalent constraints doing model filtration. How might we handle probabilistic constraints?

One way to interpret probability in h-Logic is as a measure over admissible models. We assign a probability measure to Wh, then define Ph(φ) as the measure of φ-satisfying models in Wh, relative to the total measure of Wh.

And this shows why the same event can have different probabilities for different agents: They’re working with different epistemic provisa.

For finite cases with no reason to favor one model over another, this reduces to a simple ratio of model counts:

Ph(φ) = |{m ∈ Wh : m ⊨ φ}| / |Wh|

Let’s say you shuffle a standard deck and look at the top card: King of Diamonds. Meanwhile, Sven is betting on the top card’s suit without having seen it.

Your Provisum:

You = {

52-card deck,

Standard suits/ranks,

Deck shuffled,

Top card is King of Diamonds

}

Sven’s Provisum:

Sven = {

52-card deck,

Standard suits/ranks,

Deck shuffled

}

WYou contains only models where the top card is King of Diamonds; in all of these models, the top card is a diamond.

PYou (the top card is a diamond)

= |{m ∈ WYou : top card is diamond}| / |WYou|

= 1/1

= 100%

Meanwhile, WSven contains 52 models (one for each possible top card). Only 13 of those models have a diamond card on top.

PSven (the top card is a diamond)

= |{m ∈ WSven : top card is diamond}| / |WSven|

= 13/52

= 25%
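The two calculations above can be reproduced by brute-force model counting. In this Python sketch, a model is assumed to be nothing more than the identity of the top card (so the unconstrained space has 52 models), and `admissible` and `prob` are illustrative names for Wh and Ph.

```python
from fractions import Fraction
from itertools import product

RANKS = tuple("A23456789") + ("10", "J", "Q", "K")
SUITS = ("clubs", "diamonds", "hearts", "spades")

def admissible(h):
    """W_h, taking a model to be just the identity of the top card."""
    return [card for card in product(RANKS, SUITS) if all(c(card) for c in h)]

def prob(h, phi):
    """P_h(phi): share of admissible models satisfying phi (equal weights assumed)."""
    models = admissible(h)
    return Fraction(sum(1 for m in models if phi(m)), len(models))

# Sven knows only the deck; you also know what the top card is.
sven = []
you = [lambda card: card == ("K", "diamonds")]

is_diamond = lambda card: card[1] == "diamonds"

print(prob(you, is_diamond))   # 1
print(prob(sven, is_diamond))  # 1/4
```

The equal weighting is the “no reason to favor one model over another” assumption; a loaded deck would need a weighted measure instead of a plain count.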

(Note: If you want, you can do everything through Ph since □h φ just means Phφ = 1 (all models in Wh satisfy φ), ◊h φ just means Phφ > 0 (some models in Wh satisfy φ), and ¬◊h φ just means Phφ = 0 (no models in Wh satisfy φ). I don’t want the import of this observation to be lost on readers; it is broadly recognized that probability depends on priors, and in exactly the same way, possibility & necessity should have always been relativized to provisa.)

To enlighten Sven’s provisum, we do the same set union trick we did with the Battlefield situation and the Roshambo counterfactuals:

P{h ∪ {ψ}}(φ)

= |{m ∈ Wh : m ⊨ φ ∧ ψ}| / |{m ∈ Wh : m ⊨ ψ}|

Notice that this is basically the same as Bayesian conditioning…

P(φ | ψ)

= P(φ ∧ ψ) / P(ψ)

… with the difference that we’re explicating not just the update, but what we started with, too.
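As a quick check that extending a provisum matches conditioning, here is a Python sketch on the same deck. The evidence “the top card is red” is a hypothetical example chosen for illustration, as are the helper names; models are again assumed to be equally weighted top cards.

```python
from fractions import Fraction
from itertools import product

RANKS = tuple("A23456789") + ("10", "J", "Q", "K")
SUITS = ("clubs", "diamonds", "hearts", "spades")

def admissible(h):
    """W_h, with a model taken to be the identity of the top card."""
    return [card for card in product(RANKS, SUITS) if all(c(card) for c in h)]

def prob(h, phi):
    """P_h(phi) as a ratio of model counts."""
    models = admissible(h)
    return Fraction(sum(1 for m in models if phi(m)), len(models))

sven = []
is_diamond = lambda card: card[1] == "diamonds"
is_red = lambda card: card[1] in ("diamonds", "hearts")  # hypothetical new evidence

# Provisum extension: add the evidence as a constraint, then measure.
updated = prob(sven + [is_red], is_diamond)

# Bayesian conditioning computed inside Sven's original provisum.
bayes = prob(sven, lambda c: is_diamond(c) and is_red(c)) / prob(sven, is_red)

print(updated, bayes)  # 1/2 1/2
```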

(Note: Some might ask, “Hey, what’s the real probability?” But you know h-Logic’s answer: We’re not dealing with provisum-independent probability, just as there’s no provisum-independent necessity. However, just like our earlier diplomacy with moral realists, users who affirm objective chance can model this with a privileged “physical” provisum with h-Logic, and can of course simulate alternatives.)

In the above, every card had an equal shot. But sometimes there are reasons to favor one model over another, like in the case of a loaded die, and then the choice of measure is crucial.

Now let’s look at a payoff of explicitly relativizing probability.

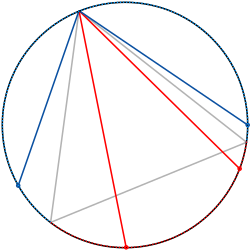

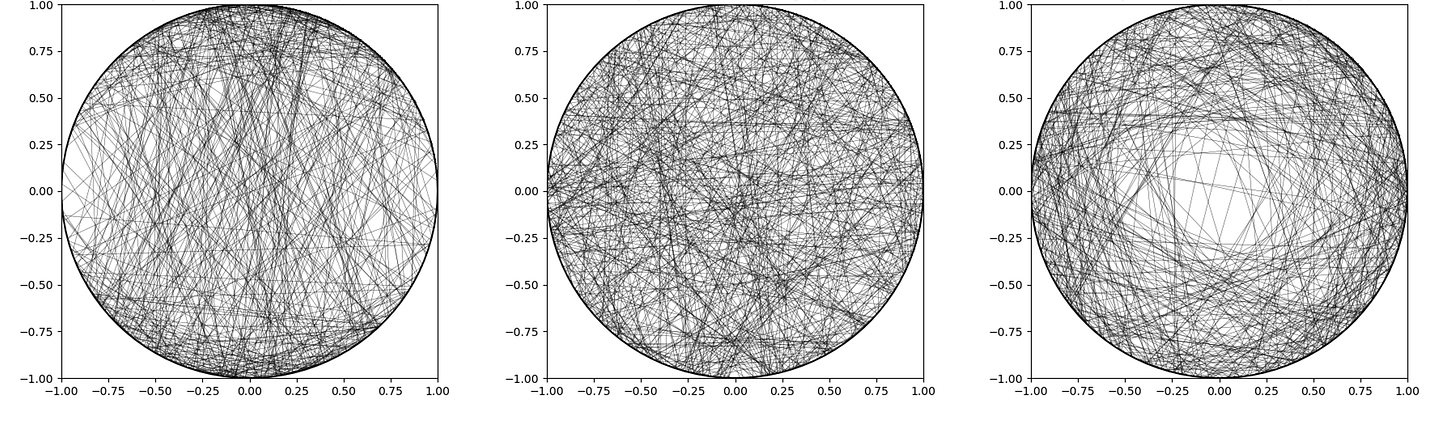

Classical probability theory uses the Principle of Indifference: If you have no reason to favor one outcome over another, you assign them equal probabilities. But this principle faces a notorious problem, in that it can give different distributions depending on how you build your RNG (so to speak). Way back in 1889, Joseph Bertrand gave us his paradox: “A chord is randomly drawn in a circle. What’s the probability it’s longer than the side of an inscribed equilateral triangle?” It turns out that 3 different sampling methods each satisfy the Principle of Indifference, yet give different probabilities:

Method 1: Random Endpoints on the Circumference

ChordByEndpoints = {

circle of radius r,

choose two points uniformly on circumference,

chord connects them,

...

}

Per Method 1, P = 1/3.

Method 2: Random Radius and Angle

ChordByRadius = {

circle of radius r,

choose a radius uniformly (by angle),

choose a point uniformly along that radius; the chord is perpendicular to the radius there,

...

}

Per Method 2, P = 1/2.

Method 3: Random Midpoint Inside the Circle

ChordByMidpoint = {

circle of radius r,

choose point uniformly inside circle,

chord has this as midpoint,

...

}

Per Method 3, P = 1/4.

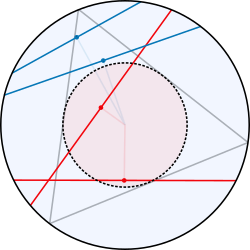

That is, all three methods are “Indifferent” in terms of uniform distribution of choice, yet they produce different “heatmaps”:

But by expressing these as different provisa with different model spaces, the reason why this is happening is obvious: There is no “the” probability of a random chord, instead there’s PChordByEndpoints, PChordByMidpoint, and PChordByRadius:

PChordByEndpoints(chord > triangle side)

= |W(ChordByEndpoints ∪ {chord > triangle side})| / |WChordByEndpoints|

= 1/3

PChordByMidpoint(chord > triangle side)

= |W(ChordByMidpoint ∪ {chord > triangle side})| / |WChordByMidpoint|

= 1/4

PChordByRadius(chord > triangle side)

= |W(ChordByRadius ∪ {chord > triangle side})| / |WChordByRadius|

= 1/2

The different provisa generate different model spaces, so the same event restricts those spaces differently, yielding different probabilities. As we discussed in the prior note, none of those probabilities are capital-R “Real,” but they’re lower-case-r “real per” the provisa specifying the constraints by which they are determined.
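If you’d like to watch the three provisa disagree, a Monte Carlo sketch in Python does the trick. Each sampler below is my own reading of the corresponding provisum as a generating procedure; the function names, the sample size, and the seed are arbitrary assumptions.

```python
import math
import random

def chord_by_endpoints(rng, r=1.0):
    """Two uniform points on the circumference; return the chord length."""
    a, b = rng.uniform(0, 2 * math.pi), rng.uniform(0, 2 * math.pi)
    return 2 * r * abs(math.sin((a - b) / 2))

def chord_by_radius(rng, r=1.0):
    """Uniform point along a radius; chord is perpendicular to the radius there."""
    d = rng.uniform(0, r)  # distance from center (the angle doesn't affect length)
    return 2 * math.sqrt(max(0.0, r * r - d * d))

def chord_by_midpoint(rng, r=1.0):
    """Uniform point in the disc used as the chord's midpoint."""
    d = r * math.sqrt(rng.random())  # sqrt makes the point uniform over area
    return 2 * math.sqrt(max(0.0, r * r - d * d))

def estimate(sampler, n=100_000, seed=0, r=1.0):
    """Fraction of sampled chords longer than the inscribed triangle's side."""
    rng = random.Random(seed)
    side = r * math.sqrt(3)
    return sum(sampler(rng, r) > side for _ in range(n)) / n

results = {
    "ChordByEndpoints": estimate(chord_by_endpoints),
    "ChordByRadius": estimate(chord_by_radius),
    "ChordByMidpoint": estimate(chord_by_midpoint),
}
# The estimates should land near 1/3, 1/2, and 1/4 respectively.
for name, value in results.items():
    print(f"{name}: {value:.3f}")
```

Same event, same circle, three generating procedures, three answers; the disagreement is in the provisa, not in the math.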

This comes up again and again in probability theory. Apparent paradoxes can arise from implicit ambiguities about reference classes, sampling procedures, generating processes, etc. h-Logic clears the air through provisum specification.

Essence & Grounding

Now that we’re acclimated to using provisa as the “basis” for evaluation, we can get to the “bottom” of conceptual evaluation, and see how it brings clarity to metaphysical puzzles that just needed better specification.

Kit Fine argued that traditional necessity can’t capture essence or grounding. It’s necessary that Socrates is a member of {Socrates}, but not essential to Socrates; rather, it’s essential to the set. And the set {Socrates} depends on Socrates, not vice versa, even though necessity goes both ways.

h-Logic handles both by making essence provisa explicit and distinguishing internal from external grounding.

First we’ll talk about h-Logic’s way of framing essence, and a few related issues (vagueness, rigid designation, and qualitative equivalence). Once we’re comfy with provisa as the grounds of essence, we’ll be ready to talk about how this reframes (in a satisfying way!) the goals behind grounding (as an act).

Essence as Definitional Provisa

Have Triangle be the provisum containing criteria for counting as a triangle:

Triangle = {

has exactly three sides,

is a closed polygon,

sides are straight line segments

}

These are all the things that are essential to any Triangle, like, bare minimums (err, minima?).

□Triangle(has three sides)

I.e., “To count as a triangle, something must have 3 sides.”

I.e., “Having 3 sides is essential to being a triangle.”

But…

¬□Triangle(is equilateral)

I.e., “Being equilateral is not essential to being a triangle.”

Instead, having equal sides would be an “accident” of triangularity:

◊Triangle(is equilateral)

I.e., “A triangle can have equivalent side lengths, but it doesn’t have to.”

Triangularity “has essence” only insofar as it’s what we’ve stipulated as the membership criteria for the category “Triangle.”

Definitional Change as Shifting Essence

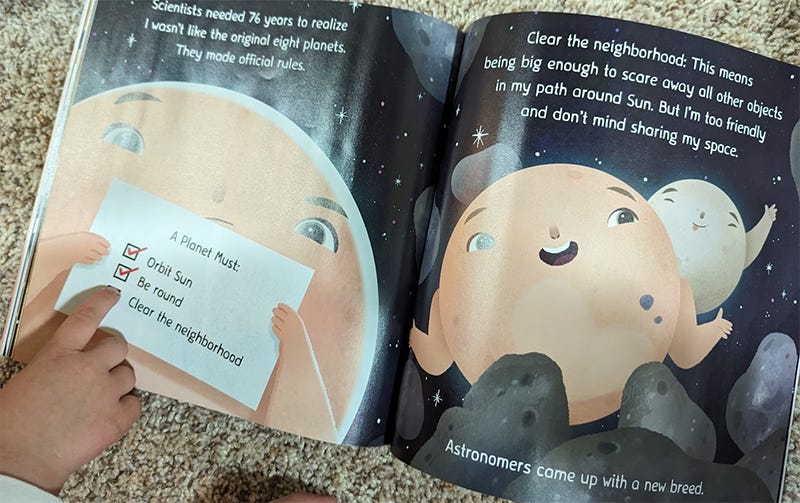

Remember when this happened?

Planet1930 = {

orbits the sun,

is roughly spherical,

...

}

Planet2006 = {

orbits the sun,

is roughly spherical,

has cleared its orbital neighborhood,

...

}

Poor Pluto; □Planet1930(Pluto is a planet), but sadly, □Planet2006¬(Pluto is a planet).

Did we find out that Pluto isn’t “really” a planet? That’s a weird framing; most boringly, it’s just a decision to use different definitional criteria. But you can characterize it that way if you want, if “really” is doing the job of raising the criteria bar (and “truly,” “really,” “genuinely,” etc. are quite often doing this very thing in common & philosophical usages).

There will be some who scoff at the prospect of “shifting essence.” Our reply (like a broken record, at this point) is that those with essentialist dispositions can just privilege certain membership provisa as immutable (like criteria to count as “Triangle”) while allowing that other provisa can be shifty (like criteria to count as “Miniature American Shepherd”).

Handling Fuzzy Criteria

If “bald” is vague, different provisa can implement different standards:

Baldness1 = {has fewer than 50 hairs per square inch}

Baldness2 = {has fewer than 200 hairs per square inch}

Baldness3 = {I judge them bald on sight}

So □Baldness2(Horace is bald) might hold while □Baldness1(Horace is bald) or □Baldness3(Horace is bald) don’t.

This handles the issue of vague sortals having no absolute criteria. Different provisa implement different precisifications of “bald,” “heap,” “tall,” “child,” “red,” etc. There’s no privileged provisum that captures what the sortal “really” means (unless we elect to privilege one).

So h-Logic doesn’t “solve” vagueness or something, you just have to be explicit (to the satisfaction of those involved) about which precisification you’re using. And since some rough test (like that contained in Baldness3) can spit out bivalent “pass/fail,” we’re able to build & use precise models while retaining traction upon what Wittgenstein called “rough ground.”

And now we’re ready to turn back to David Lewis’s notion of “similarity,” and see how this framing of essence helps tackle the problem of…

Rigid Designation (or Lack Thereof)

When we say “If Nixon had been a used car salesman...”, we feel like we want to track “Nixon himself” across scenarios, not just someone similar to Nixon. But the world that’s “closest” per whatever criteria might not preserve what we see as essential to Nixon per identification criteria.

Kripke’s examples highlight this. “If Nixon had lost the 1968 election” seems fine at first glance; it’s the same Nixon suffering an alternative history like something out of the MCU. But “If Nixon had been a poached egg” looks pretty dumb, yeah? We think of some stuff (like that he’s a dude) as essential.

Lewis then asked, “Which properties are essential? How does the similarity metric weight them?”

With h-Logic, we just explicate.

NixonEssence = {

Nixon was born on January 9, 1913,

Nixon's biological parents were Francis and Hannah,

Nixon was human,

...

}

Here, these constraints are defining Nixon. Models that don’t satisfy them aren’t Nixon-models.

“Could he have been a used car salesman?” is just asking if some admissible model per the provisum has him as a used car salesman (“can/could” is just possibility, remember?).

◊NixonEssence(was a used car salesman)?

And the answer is “yes.”

Meanwhile, “Could he have been an armadillo?” is a “no,” because that conflicts with the provisum.

But you don’t have to use that provisum. In my career I’ve been a software engineer, a product analyst, and a design director. These experiences made me “who I am today,” and if you want, you can call those “defining.” And if you do, and use such a provisum, then…

◊StanEssence¬(was a coder)?

… would be false.

The rigidity of designation is, and always was, a function of the opt-in tolerances of identification. h-Logic gives us a way to effortlessly model this, which equips us to avoid the quicksand of underspecified similarity.

This preps us for the next move:

Relativizing Equivalence

Normie logic treats identity (=) as all-or-nothing, but many practical questions ask about qualitative equivalence; are these the same in ways that matter to us, in our context?

h-Logic handles this by making explicit which features the equivalence cares about, slapping a subscript on the equal sign, “overloading” it (to borrow terminology from software programming).

The definition:

x =h y

I.e., “x equals y per constraints of h”

… holds when:

∀φ ∈ h: (x ⊨ φ ↔ y ⊨ φ)

“For all constraints in h, x satisfies it if and only if y satisfies it.”

The provisum h specifies which features are relevant. Different provisa check different features, yielding different equivalence relations.

Application to Theseus’s Ship

Theseus’s Ship has all its planks replaced over time. Is the rebuilt ship “the same ship” as the original?

Provisum 1: Material Stuff

MaterialStuff = {

planks,

bolts,

rope,

mast,

...

}

We ask:

OriginalShip =MaterialStuff RebuiltShip?

I.e., ∀φ ∈ MaterialStuff: (OriginalShip ⊨ φ ↔ RebuiltShip ⊨ φ)?

No; the rebuilt ship has different planks and whatnot.

Provisum 2: Important to Me

ImportantToMe = {

carries Theseus's people & cargo,

Theseus regards himself as owner,

rewind only acts I count as "repairs" (if any) and you'll end up with the original ship in terms of material stuff

}

We ask:

OriginalShip =ImportantToMe RebuiltShip?

I.e., ∀φ ∈ ImportantToMe: (OriginalShip ⊨ φ ↔ RebuiltShip ⊨ φ)?

Does it carry Theseus’s people & cargo? Yes. Does Theseus regard it as his? Yes. If we rewind through acts I count as “repairs” (if any) do we get back to the original ship re: material stuff? Yes.

The rebuilt ship is the sameImportantToMe ship.

(Note: We’re shortcutting, but you can see deeper opportunity to specify provisa here. First, you can interrogate my provisum to count something as a “repair”; some such provisa would disqualify Hobbes’s “Two Ships,” some would disqualify “Upgraded Ships,” etc. Second, the “rewind” constraint can use the earlier MaterialStuff equivalence to check.)

The general pattern is that, for any “Are x and y the same C?” question:

Define provisum h with features that matter for being “the same C”

Then ask “x =h y?”, i.e., “∀φ ∈ h: (x ⊨ φ ↔ y ⊨ φ)?”
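The two-step pattern above is short enough to write down as a Python sketch. The ship records, their field names, and the way each provisum is turned into a list of checks are all assumptions for illustration; in particular, the “rewind through repairs” constraint is left out to keep things small.

```python
def equivalent(x, y, h):
    """x =_h y: x and y agree on every constraint in provisum h."""
    return all(phi(x) == phi(y) for phi in h)

# Hypothetical records standing in for the two ships.
original = {
    "planks": frozenset({"p1", "p2", "p3"}),
    "owner": "Theseus",
    "carries_crew": True,
}
rebuilt = {
    "planks": frozenset({"q1", "q2", "q3"}),
    "owner": "Theseus",
    "carries_crew": True,
}

# MaterialStuff: one check per original plank ("does the ship contain it?").
material_stuff = [
    (lambda ship, p=p: p in ship["planks"]) for p in sorted(original["planks"])
]

# ImportantToMe, minus the "rewind" constraint.
important_to_me = [
    lambda ship: ship["carries_crew"],
    lambda ship: ship["owner"] == "Theseus",
]

print(equivalent(original, rebuilt, material_stuff))   # False
print(equivalent(original, rebuilt, important_to_me))  # True
```

Swapping in a different provisum changes the verdict without touching the ships, which is the whole point of putting the subscript on the equals sign.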

This works for all of these classic “stumpers” that continue to drive viral ant mills:

“Am I the same person after memory loss?”

“Am I the same person I was as a teenager?”

“Is it the same God across these two religions?”

“Are these two organisms the same species after allopatry?”

“Is that the same piece of art after its restoration?”

The issue isn’t that nobody figured this kind of dissolution out. Rather, it’s that this kind of dissolution kills the party and cannot self-boost (“Dead conversations tell no tales”), whereas the chase for conceptually absolute criteria (“the right provisum”) is a self-boosting rager.

h-Logic helps here by giving us an exciting way to model and express such dissolutions: It ends the party by doing a cannonball in the pool and drenching the guy harassing everyone, and that is rad.

Qualitative equivalence is always provisum-relative. These identity puzzles dissolve into explicit choices about which features we’re counting as crucial.

(Note: Even so-called “numerical identity” causes these ant mills due to polysemy, that is, subtly different criteria people use for that term. The remedy is that it’s provisum-relative identity all the way down, unless and until one bootstraps a certain provisum as privileged; see next section.)

Gaining Ground via h-Logic

We’ve seen how h-Logic handles essence through definitional provisa, and how similar provisa provide specifying tools for designation & equivalence. If you’re a vibe reader, you might be sensing that these are “kinda the same stuff.”

Fine’s other major concern was grounding, the relation where one thing depends on or is explained by another. In h-Logic we can draw 2 kinds of grounding: Internal (which is trivial) and external (which we leverage for our acts of vindication, or justification, or authentication, or corroboration, or explanation, etc.).

Internal Grounding (Bootstrapping)

For any provisum h, and any formula φ that is one of h’s constraints,

⊨ □h φ

This is trivial, of course, since h necessitates its own constraints by stipulation. Every provisum internally grounds what it stipulates. Arithmetic grounds “2 + 2 = 4,” Roshambo grounds “rock beats scissors,” etc.

Q: “When would you bootstrap a provisum?”

A: When in the context you’re trusting and/or privileging it, taking it for granted to “try it on for size,” or taking it for granted because you have no other practical choice (like if a bear is chasing you). Gotta start somewhere.

External Grounding (Vindication)

Provisum h externally grounds provisum g when:

∀φ ∈ g: ⊨ □h φ

“For all constraints φ in g, h necessitates φ”

Here, h’s constraints independently necessitate g’s constraints. This is corroboration, showing that g’s stipulations follow from a different, already-bootstrapped provisum.

External grounding matters when you’re evaluating a provisum with a critical eye; when you’re interrogating it.

Crucially, external grounding doesn’t require that individual constraints from h necessitate individual constraints from g. The conjunction of h’s constraints might necessitate g’s constraints even when no single constraint does.
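Here is about the smallest case I can think of where the conjunction grounds something that no single constraint does, sketched in Python. The atoms `p` and `q`, and the helper names, are assumptions for illustration: h stipulates p and p → q, while g stipulates q.

```python
from itertools import product

ATOMS = ("p", "q")

def admissible(h):
    """W_h: truth assignments to the atoms satisfying every constraint in h."""
    models = [dict(zip(ATOMS, v)) for v in product([False, True], repeat=len(ATOMS))]
    return [m for m in models if all(c(m) for c in h)]

def box(h, phi):
    """Box_h phi: phi holds in every admissible model of h."""
    return all(phi(m) for m in admissible(h))

def externally_grounds(h, g):
    """h externally grounds g: every constraint of g is h-necessary."""
    return all(box(h, phi) for phi in g)

p = lambda m: m["p"]
p_implies_q = lambda m: (not m["p"]) or m["q"]
q = lambda m: m["q"]

h = [p, p_implies_q]
g = [q]

print(externally_grounds(h, g))                  # True
print([externally_grounds([c], g) for c in h])   # [False, False]
```

Taken together, h’s constraints necessitate q; taken one at a time, neither does.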

This is what emergence is, whether called “weak” or “strong”; there’s no innate difference, it’s provisum-relative:

E.g.,

LavaLandPremise = {

"Durdle Dwarves" framework,

"Lavafalls" ruleset,

200 x 200 board,

frame 1 has a total floor and 1 Dwarf atop,

process 4000 frames

}

LavaLandEvents = {

Groups of repeating spawns will give me the impression of cascades of lava,

These cascades will grow and shrink and whatnot,

At frame 500 a lone dwarf traverses beneath a low central bridge then swings back and drags along what amusingly looks like 'loot'

}

(Animation: the LavaLand run.)

Again, no single constraint within h necessitates any of the constraints in g, but the conjunction of everything in h necessitates each thing we find in g.
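The same shape can be seen at toy scale. Below is a minimal Python sketch, not an official h-Logic implementation. It assumes each constraint can be modeled as a truth-function over a couple of hypothetical atoms (`p`, `q`), and that "□h φ" can be read as "φ holds in every world h admits." The function names (`admissible`, `necessitates`, `externally_grounds`) are made up for illustration.

```python
from itertools import product

ATOMS = ["p", "q"]  # hypothetical atomic propositions (assumption)

def worlds(atoms):
    """Enumerate every truth assignment over the atoms."""
    for values in product([False, True], repeat=len(atoms)):
        yield dict(zip(atoms, values))

def admissible(provisum, atoms=ATOMS):
    """W(h): the worlds that satisfy every constraint in the provisum."""
    return [w for w in worlds(atoms) if all(c(w) for c in provisum)]

def necessitates(provisum, phi, atoms=ATOMS):
    """Assumed reading of 'box-h phi': phi holds in every world h admits."""
    return all(phi(w) for w in admissible(provisum, atoms))

def externally_grounds(h, g, atoms=ATOMS):
    """h externally grounds g when h necessitates every constraint in g."""
    return all(necessitates(h, phi, atoms) for phi in g)

# h has two constraints: "p" and "if p then q".
p = lambda w: w["p"]
p_implies_q = lambda w: (not w["p"]) or w["q"]
h = [p, p_implies_q]

# g has one constraint: "q".
q = lambda w: w["q"]
g = [q]

# Internal grounding: h trivially necessitates its own constraints.
internal = all(necessitates(h, c) for c in h)

# External grounding: only the conjunction of h's constraints does the job.
grounded_jointly = externally_grounds(h, g)
grounded_by_p_alone = externally_grounds([p], g)
grounded_by_rule_alone = externally_grounds([p_implies_q], g)
```

Here `grounded_jointly` comes out true while both single-constraint checks come out false, which is the LavaLand situation in miniature. Brute-force enumeration only works for tiny examples; for something like 4000 frames of Durdle Dwarves, running the simulation is the "legitimate work."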

Hence while h-Logic aids our specification, it’s not wizardry or something. You can’t without some effort draw the winning endgame moves against Magnus Carlsen from the Chess provisum alone. The entailments of a provisum are where legitimate work & discovery await, and why “predictable” (an “-ible/-able” word with variant success conditions, provisions, and restrictions!) is not some well-defined concept upon which to blithely hang a “strong/weak” taxonomy.

Finally, this vindication can go both directions. Just as two children can corroborate one another’s eyewitness provisa, physics theory can ground chemistry results and chemistry theory can ground physics results.

Traditional grounding theory recoils a bit at bidirectionality; it wants asymmetry like temporal priority or ontological dependence. But this demand comes from privileging frameworks where ‘building’ or ‘construction’ metaphors are familiar. h-Logic reveals this as optional, and to our advantage. If explanation-seeking is about epistemic enrichment and not innately about reductive spelunking, then we can just as easily put the LavaLand provisum under the interrogation lamp, take LavaLandEvents for granted (after all, we can see it happen), and then vindicate (or not!) the LavaLand provisum.

(Indeed, the animated GIF is cropped, so are you sure it came from a 200 x 200 playfield? Turns out I lied to you. It was from a 192 x 192 playfield. The result cannot happen on a 200x200 playfield, so it can falsify the deceptive provisum.)

Bidirectionality reminds us that open possibilities downstream are the same species as open possibilities upstream. A given “results” provisum can allow all sorts of upstream (temporally prior, constitutive, etc.) models, at which point we can seek further results to further constrain those models, or if we’re at a dead end, we can simply stop and weight those models according to the results we have — and if we choose to do so, this will present as foundational probabilism.

Notice how the boundaries between ontology & epistemology are blurring as we’re getting used to “loosely typed” modal expression. Yet when we do so, our air is now fresh. This is all just provisum comparison & corroboration. Whereas traditional grounding theory gets stuck in endless debates about its nature & features, h-Logic sidesteps all this and says, “Start with provisa, then look at what vindicates what. Whether you call that ‘grounding,’ and whether it must be asymmetric, is up to you and/or what you’re privileging.”

This is a win because it shows that the grounding debate was underspecified all along. Different camps were just privileging different provisa for what “grounding” should do.

Handling Socrates & {Socrates}

Now we can tackle Fine’s problem: “It’s necessary that Socrates ∈ {Socrates}, but this shouldn’t count as essential to Socrates; rather, it’s essential to the set.”

With h-Logic, we can just define essence provisa for both:

EssenceOfSocrates = {

human,

ancient Greek,

thoughtful,

mortal,

...

}

EssenceOfSocratesSet = {

is a set,

has Socrates as its only member

}

Which gives us:

¬□EssenceOfSocrates(Socrates is the only member of some particular set)

“It’s not essential to Socrates that some set has him as sole member.”

□EssenceOfSocratesSet(Socrates is the only member)

“But it is essential to {Socrates} that Socrates is its only member.”

The membership relation is a logical tautology (X ∈ {X} holds in every model), so no provisum can deny it. But Fine’s insight wasn’t about that; it was about “what explains what.” h-Logic captures this through vindicating provisa: The fact that {Socrates} has Socrates as a member is vindicated by EssenceOfSocratesSet (which specifies the set’s membership criteria), not by EssenceOfSocrates (which specifies what makes Socrates Socrates). So the necessity traces to the set’s essence, not to Socrates’s essence.

Persuasive Arguments as “Impelling” or “Inert”

Reframing questions of essence & grounding as questions of provisa & comparison provides us with new language to reflect “what we’re up to” when we do persuasive argumentation, and when informal fallacies matter and why.

A “valid” argument is when the conclusion follows from the premises.

Yet this is a rather low bar. “The god Tpotato exists; Ergo, the god Tpotato exists,” is completely valid, but it’s not going to convince the Tpotato unbeliever.

A “sound” argument is a valid argument whose premises are in fact true.

But this standard is oblivious to fallibilism, and certainly won’t work with our h-Logic project, where we’re bouncing both the T modal axiom and the T of “JTB”-knowledge (big-T “Truth”).

Instead, we’re looking for something “in between,” something that gives us the oomph of “sound” without the quaint expectations of big-T “Truth” accessible to participants.

So let’s run “impelling”: A critical pump that draws a good faith interlocutor “in” to a different position.

An argument is “impelling” when it reveals an inconsistency within an interlocutor’s provisum, showing that commitments they accept lead to conclusions they reject, in turn pressuring revision of some kind.

Suppose your friend has two provisa, h and g. h contains {N, O, P, Q}, and g contains {¬R}. If you can show that □hR (not an explicit constraint of h, but an entailment or an emergent product or whatever), then this creates pressure to retreat from h and/or g in some way, or to defeat your demonstration, on pain of no admissible models per the conjunction of h and g.

If however your argument does not create an inconsistency, then you aren’t pulling your friend anywhere; your friend continues to go on his present inertia. An argument that is not impelling is thus “inert.”

Example: The Questionable Vegan

Your friend Jesse self-identifies as vegan.

h = {

Jesse is vegan,

vegans don't order animal products,

eggs are animal products

}

At breakfast, they’re about to order scrambled eggs. But □h¬(Jesse orders eggs). With the language of h-Logic, you can inform Jesse that this provisum conjoined with their behavior admits no models. (That totally wouldn’t be socially awkward.)

Or maybe something like, “Hey, you’re not practicing what you preach.” Basically, you’re impelling them away from an apparent inconsistency by calling attention to it. This does not require that “vegan” have a purely objective definition, it does not require agreeing with veganism’s requirements. It doesn’t require that any of these be strictly “True” as soundness demands. Yet still, there’s “oomph”: Jesse is under pressure to revise something, or at least you think they are (I didn’t exactly say whether Jesse holds to h).

Here are some dialogue tree options for Jesse:

1. Drop “Jesse is vegan.”

“Oh, I guess I’m not actually vegan; I’m vegetarian.”

2. Drop “Jesse orders eggs.”

“Oh yeah, I shouldn’t order eggs. This is taking some getting used to.”

3. Drop “vegans don’t eat eggs.”

“I’m a veggan, which I consider a type of vegan per my taxonomy. If you don’t like that, pound sand.”

4. Drop “eggs are animal products.”

“When I think ‘animal products’ I think stuff where they have to, like, actually die.”
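For the mechanically inclined, Jesse's situation can be sketched in a few lines of Python. This is only an illustration under loud assumptions: the general rule "vegans don't order animal products" is flattened into a single propositional constraint about Jesse and eggs, the three atoms are hypothetical, and `admissible` is a made-up helper that brute-forces the worlds a set of commitments admits.

```python
from itertools import product

# Hypothetical atoms for this one breakfast (assumption).
ATOMS = ["vegan", "eggs_are_animal_products", "orders_eggs"]

def admissible(commitments):
    """Return every truth assignment that satisfies all the commitments."""
    worlds = (dict(zip(ATOMS, vals))
              for vals in product([False, True], repeat=len(ATOMS)))
    return [w for w in worlds if all(c(w) for c in commitments.values())]

# The provisum h plus Jesse's behavior, each as a named constraint.
commitments = {
    "Jesse is vegan": lambda w: w["vegan"],
    # Flattened version of the rule, specialized to Jesse and eggs (assumption).
    "vegans don't order animal products": lambda w: not (
        w["vegan"] and w["eggs_are_animal_products"] and w["orders_eggs"]
    ),
    "eggs are animal products": lambda w: w["eggs_are_animal_products"],
    "Jesse orders eggs": lambda w: w["orders_eggs"],
}

# The full bundle admits no models: that is the "oomph."
no_models = len(admissible(commitments)) == 0

# Each dialogue option drops one commitment; count the worlds that come back.
repairs = {}
for dropped in commitments:
    remaining = {k: v for k, v in commitments.items() if k != dropped}
    repairs[dropped] = len(admissible(remaining))
```

Every entry in `repairs` is nonzero, so each of the four revisions restores consistency. Which one Jesse ought to pick is not something this sketch can say; that depends on whatever other provisa are being privileged.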

(Note on “costs”: Some of the above might look rather dubious. If you want to scrutinize (or celebrate) those revisions per other provisa, go nuts; the “cost/benefit” of each one will be per those provisa. If Jesse goes #2, they get some cred with their vegan friends but lose their favorite breakfast; if Jesse goes #4, they lose social cred with anyone who privileges the conventional standards for that term. Verily, we don’t need to have any pretenses of standardless standardization. This is the same move we did earlier re: “similarity” and “relevance.” h-Logic turns us away from the doors marked “underspecification” behind which looms an endless discursive labyrinth, from which there is no escape, save for backtracking.)

Savvy readers will observe that this all resembles an “internal critique,” when you magnanimously take the interlocutor’s beliefs for granted but follow it up with an “inconsistency” sucker punch. But it doesn’t have to be internal to the interlocutor; it can just be internal to the conjunction of shared beliefs among discussion participants. (Or shared confidences; remember that we can reframe bivalent h-Logic in terms of 0-1 probability, if we want.)

This explains why persuasion isn’t just about presenting sound arguments. It’s about presenting arguments whose premises are held by the target of persuasion, but where valid conclusions from those premises are rejected by that target, inviting the target to revise their set of premises in some way.

(Note: A conclusion can be a bundle of some proposition plus a level of conviction in that proposition. If the target has some confidence level about proposition φ, a valid conclusion from accepted premises that raises or lowers confidence in φ would be impelling.)

To recap, this is how it works:

Let h be some of the interlocutor's accepted commitments (that we’re chill with… at least, for now) and g be further commitments they have of which we want to disabuse them.

An argument is impelling relative to (h, g) when there exists some φ such that □h φ, yet ¬φ ∈ g, ergo W(h ∪ g) = ∅. Here the interlocutor's total commitments admit no models, creating rational pressure to revise h, revise g, or contest □h φ and/or ¬φ ∈ g.

An argument is inert relative to (h, g) when its conclusion is either already in h (preaching to the choir), not entailed by premises the interlocutor accepts (begging what the interlocutor considers a controversial question), or consistent with g (no inconsistency bothering them).
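The recap above can be turned into a small classifier. What follows is a rough Python sketch under stated assumptions, not a canonical definition: constraints are truth-functions over hypothetical atoms, "□h φ" is read as "φ holds in every world h admits," and the syntactic test "¬φ ∈ g" is loosened to the semantic test "g together with φ admits no worlds." The names `classify`, `admissible`, and `necessitates` are illustrative only.

```python
from itertools import product

ATOMS = ["n", "o", "r", "s"]  # hypothetical atomic propositions (assumption)

def admissible(constraints):
    """W(...): every truth assignment satisfying all the constraints."""
    worlds = (dict(zip(ATOMS, vals))
              for vals in product([False, True], repeat=len(ATOMS)))
    return [w for w in worlds if all(c(w) for c in constraints)]

def necessitates(h, phi):
    """Assumed reading of 'box-h phi': phi holds in every world h admits."""
    return all(phi(w) for w in admissible(h))

def classify(h, g, phi):
    """Label an argument for conclusion phi relative to (h, g)."""
    if phi in h:                      # explicit constraint: preaching to the choir
        return "inert (already in h)"
    if not necessitates(h, phi):      # premises the interlocutor accepts don't get there
        return "inert (not entailed by h)"
    if admissible(g + [phi]):         # g can live with phi: nothing bothers them
        return "inert (consistent with g)"
    return "impelling"                # h forces phi, and g cannot accommodate it

n = lambda w: w["n"]
o = lambda w: w["o"]
r = lambda w: w["r"]
s = lambda w: w["s"]
not_r = lambda w: not w["r"]
n_and_o_give_r = lambda w: not (w["n"] and w["o"]) or w["r"]

h = [n, o, n_and_o_give_r]  # accepted commitments; r is entailed but not listed
g = [not_r]                 # the commitment we want to dislodge

results = {
    "r": classify(h, g, r),
    "n": classify(h, g, n),
    "s": classify(h, g, s),
    "r, with empty g": classify(h, [], r),
}
```

Only the first case is impelling: h necessitates `r`, g rejects it, and the union admits no worlds. The other three land in the three "inert" buckets from the recap. The order of the checks is a design choice of this sketch.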

My motivation to push for new terms, and the use of h-Logic to “set-ify” premises, is because of patterns of discursive quicksand that I’ve seen haunting popular discourse, for decades, even among folks I’d expect to “know better”:

Foul I. Treating validity as a mic drop.

Foul II. Demanding soundness when diagnosing the “Truth” of the premises is untenable, or just way out of scope.

Foul III. Suggesting that making an internal critique requires signing on to one or more of its premises, in a bumbling attempt at a reverse internal critique (e.g., “Your theodicean challenge requires that moral objectivism is true, which you reject!”).

Foul IV. Invoking principles & heuristics that the target does not accept due to noncommitment (e.g., PSR, naturalism, conceptual absolutism, etc.).

Foul V. Invoking underspecified principles & heuristics (e.g., “simplicity” or “parsimony”) whose specifications the target does not accept.

Foul VI. Invoking conceptual criteria that the target does not accept (e.g., some definition of God and its downstream conceptual demands, if accepted).

Foul VII. Treating “metaphysical modality” as a single modality with determinate meanings of possibility & necessity, even though their provisions are up in the air & continually interrogated.

Foul VIII. Doing one of the four things above (begging controversial items), then getting pedantic & defensive when the opponent says they’re begging questions (“I’m not technically begging the question…”).

Are you as exhausted by these VIII Fouls as I am? Heck yeah you are.

h-Logic demands adequate specification, and “impelling” sets the bar, an exorcist for debates accursed with such distractions.

And now, for our last section on philosophical payoffs, we’ll see how setting aside big-T “Truth” (which we’re doing for “impelling/inert”) gives h-Logic a funny ability:

Expressing Different Truth Theories